The Power of Structure

Welcome to Project Mage!

The purpose of this article is twofold:

- to describe an intent to build a powerful Common Lisp environment and a set of applications, all primarily centered around
  - seamlessly-structural text editing,
  - interface building,
  - knowledge-keeping;

- and to signal a request to the community to reach a (rather moderate) monthly funding goal for the said project.

Overall, the goal is to build a set of text+GUI-driven platforms with highly-interactive and customizable applications, all comprising an efficient environment for the power-user.

I estimate it will take about 5 years to fulfill the intent.

You can find the campaign details here.

This article aims to explain what's special about this project, who will find it useful, what goals it sets, and what ideas motivate it.

I am building this project not just as a programmer looking to improve his tools, but primarily as a power user. And power users are, really, the intended audience. Regular users could reap the project's fruit later on, but this campaign is not aimed at them.

There are also a few essays/articles that follow this one where I lay out my thoughts on Emacs, text editing, flexibility, the web and a few other things. Don't hesitate to start with those writings and come back later, if you wish – you will find them all listed at Home. In particular, if you are an Emacs user, you may find it appetizing to read Emacs is Not Enough first.

TLDR: If you want to get just the gist about the project's structural approach to text, please check out The Elevator Pitch article. Also, if you don't want to get bogged down in the technical detail, then SKIP the Fern section and go straight for Rune or any other section that is of most interest to you (but still, do finish reading this intro!).

The campaign for Project Mage aims to build the following:

- Fern
  - An advanced GUI platform.
  - Knowledge-Representation Prototype OO system (KR) (from CMU's Garnet[1] GUI project).
  - Multi-way constraints (UW's Multi-Garnet).
  - Contexts, configurations, an advice facility; full customization and flexibility at runtime.
  - Relative simplicity.

- Rune
  - A platform for building seamless structural editors.
  - Purpose: define a specification for lenses.
  - Stands for: Rune is Not an Editor.
  - Based on Fern.

- Kraken
  - A versatile Knowledge-Representation platform.
  - Purposes: note-taking, computational notebooks / literate programming, programmable knowledge bases.
  - Based on Rune.

- Alchemy
  - A seamlessly-structural IDE for Lisp-like languages.
  - The only target, for now, is Alchemy CL.
  - Based on Rune.

- Alchemy CL
  - Alchemy for Common Lisp.
  - Codename: Snide.

- Ant
  - A sane alternative to modern terminal emulators.
  - Purpose: REPL interfaces, advanced command-line interfaces.
  - Stands for: Ant is Not a Terminal.
  - Based on Rune and Fern.

- Hero
  - A project knowledge tracker / bug tracker / decentralized version control system.
  - Purpose: replace Git, GitHub and the like.
  - Based on Kraken.

[1] See the Garnet home page here. The latest Garnet repository may be found here.

In this article, I describe all these systems and my motivation for writing them.

To me, the most interesting development on the list is Rune. It embraces the fact that in order for us to work with information efficiently (both in speed and comfort), we need to start taking the structure of textual information a little more seriously than what is usually presumed by most contemporary text editors.

You will find Project Mage most useful if you are:

- a programmer,

- a serious user of note-taking applications,

- a user of terminal emulators,

- a user of text-editors (Vim, Emacs or any other),

- someone who collects and works with a lot of data,

- someone who finds the current establishment of text editors, terminal emulators, IDEs, note takers, bug trackers and computational notebooks to be supremely annoying,

- or, more generally, someone who considers themselves a power user.

This is a long article, so feel free to jump around and read about what interests you most. First, though, I would urge you to read Who is this project for? and The Project's Philosophy (both just below this section), and to at least skim the sections on Fern and Rune.

All the software will be free (as in beer and as in freedom), see the License section.

The anticipated timeline for the development (provided stable financial support from the community) is as follows:

- Year 0.0 – 1.0: Fern.

- Year 1.0 – 2.5: Rune, Kraken.

- Year 2.5 – 4.0: Alchemy CL, Ant.

- Year 4.0 – 5.0: Hero.

- Year 5.0 – 5.5: Reserved for contingencies.

Naturally, these are only rough guidelines. If, in the course of development, I see a much better way to rebuild something at the cost of a few months, that's likely what will happen. I don't expect any system to fall squarely within its estimate. The estimates mostly show where I expect the bulk of the work to fall.

I am pretty sure I can get it done in ~5 years. The estimate already assumes quality, because I am fairly certain the core functionality will take a bit less than that. So there are a lot of corners to cut: features that can be postponed till better times if I feel like something is getting out of hand.

My main goal is to provide a platform which can be improved upon by others. A vertical slice of sorts, but with some beef to it. And I will make the applications at least usable to myself, and that includes most of the features listed in this article.

Keep in mind that there might be a year 6 for dealing with any excessive optimism about the 5-year timeline. I have written software before, so I am no stranger to underestimation. But worry not. I started at 3 years thinking of something rather rudimentary and then bumped that to 4. And then to 5, just to make sure. So, hopefully, the beginning of the sixth year will only be for some final touches, or, better yet, for maintenance and work with the community.

So, look at it this way: it's done when it's done; but hopefully in 5 years. I talk more about this in the section How Realistic is the Time Estimate?.

I will be posting a brief summary of progress about once a month, on this website. This, of course, assumes I can work on the project in the first place, which depends on the funding success of the campaign.

I have also set up a mailing list in case someone wants to have a discussion or ask me anything. See the Contacts page.

All the software in the project will be written in Common Lisp – see the Why Common Lisp article.

Table of Contents

- Who is this project for?

- The Project's Philosophy

- Project's Goals

- Fern: A GUI Toolkit partially implemented

- Rune: A Platform for Constructing Seamless Structural Editors

- Kraken: KR-driven Programmatic Knowledge

- Alchemy: A Structural IDE for Lisp-like Languages

- Alchemy CL: A Structural IDE for Common Lisp

- Ant: Ant is Not a Terminal

- Hero: A Decentralized Version-Controlled Tracker of Development Knowledge

- An Ecosystem

- Does This Project Aim to Replace Emacs?

- Bindings?

- How Realistic is the Time Estimate?

- Documentation Goals

- Accessibility

- Target Platforms

- It's a Process

- Why This Project?

- Code

- Campaign Details

- License

Who is this project for?

This project is for power users: the type of people who get easily annoyed at doing things in a wrong, slow, or otherwise inefficient way.

Being able to program helps a lot with being a power user, but a lot of people can configure a program like Emacs without any extensive knowledge of programming. Most of the time, it's just a copy-paste of a few lines of configuration, or changing some value. It's not that hard, and especially not if there's the right kind of UI for it (developing such a UI may be beyond the scope of the campaign, but I see no problem in doing it).

As such, I don't see the potential users of the system to be just programmers. Anybody who is interested in their tools should be able to pick up on any Mage application fairly easily. I will try to make all the applications usable out of the box.

The project (within the limits of the campaign) aims to provide alternatives to, or a gateway to replace:

- advanced text editors (e.g. Emacs),

- specialized text editors (e.g. hex editors, CSV editors, etc.),

- command lines (such as those of terminal emulators),

- Sly/Slime (Common Lisp IDEs for Emacs),

- computational notebooks (e.g. Jupyter),

- note-taking applications (e.g. Roam Research, Org-mode),

- version-control systems (e.g. Git, Mercurial),

- bug trackers (e.g. GitHub),

while providing a new, powerful and fun-to-use GUI library, capable of constraint management, contextual customization and much more.

That's a lot of applications, and it may seem implausible for the 5-year timeline I have claimed. But think of these as parts of a unified platform, and you will start to see that there's a huge amount of overlap in the functionality of all of these. (I take this discussion further in How Realistic is the Time Estimate?)

But the key word here is: integration. All the applications are a part of the same system, and that provides not just for the capability of reuse, but also for a high degree of flexibility and customization power.

And Project Mage doesn't merely replace these programs to unify them under the same umbrella. It often tracks down and eliminates their common fundamental flaw: their disregard for the structure of the data that they operate on.

By the closing time of the project I aim to establish a ground for a community to form. Hero will be instrumental to this end. But I will also set up a public repository specifically for everything Mage. For more details, see the Ecosystem section.

The Project's Philosophy

Interactivity

Common Lisp is a top-notch interactive platform.

It lets you work in a live image. You can evaluate and run code without reloading. Any class, any object, or any function may be modified at runtime, at your will.[2] On top of that, there is a powerful condition system that lets you fix errors and handle exceptional situations: inspecting stack frames and changing their local bindings is something you can do quite routinely.

[2] A notable exception, on some implementations of Common Lisp, is changing the definitions of structs that have live instances. In that case the behavior is typically undefined and a warning is issued, all due to the optimizations that apply to structs.
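Here is a small sketch of that condition system in standard Common Lisp. Nothing in it is Mage-specific, and the names `bad-reading`, `parse-reading` and `sum-readings` are made up for the example. A low-level function offers a `use-value` restart. An outer handler chooses that restart before the stack is unwound, so the computation simply continues with the substituted value. At an interactive REPL without such a handler, the debugger would offer the same restart instead.

```lisp
;; A condition carrying the offending text.
(define-condition bad-reading (error)
  ((text :initarg :text :reader bad-reading-text))
  (:report (lambda (c stream)
             (format stream "Cannot parse ~S as a number." (bad-reading-text c)))))

(defun parse-reading (text)
  "Parse TEXT as an integer, offering a USE-VALUE restart on failure."
  (restart-case
      (or (parse-integer text :junk-allowed t)
          (error 'bad-reading :text text))
    ;; The restart is established at the point of failure.
    (use-value (v)
      :report "Supply a value to use instead."
      v)))

(defun sum-readings (texts)
  (reduce #'+ (mapcar #'parse-reading texts)))

(defun sum-readings-leniently (texts)
  "Treat unparsable readings as 0. The handler runs before unwinding,
so PARSE-READING just returns 0 and the sum carries on."
  (handler-bind ((bad-reading
                   (lambda (c)
                     (declare (ignore c))
                     (invoke-restart 'use-value 0))))
    (sum-readings texts)))

(sum-readings-leniently '("10" "oops" "32"))  ; => 42
```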

If you have programmed Smalltalk or Emacs or most other lisps, you probably know what this is like.

In some discussions online, this quality of interactive development is often referred to as (and thus reduced to) a Read-Eval-Print Loop (REPL), as in: an awkward command-line prompt. Contrary to that perception, REPL development may happen in a source file, by repeatedly evaluating whichever expression you are working on at the moment.

Image-based programming is important not only because of comfort, but also because of valuable state that you might not want to lose. When you are working with unsaved data, or editing a text document, or churning through a bunch of processed logs, being forced to reload the whole system is annoying, costly and at times simply unacceptable.

Hackability

It's not enough to use an image-based programming language, even the kind that lets you change any component of the system, in order for your library/platform/program to be meaningfully extensible and customizable.

Extensibility requires its own design and foresight. A truly flexible system should allow multiple modifications by multiple independent parties. It should provide a way to introspect and deal with the modifications of others. All this should, of course, be done without the user ever having to replicate any existing code.
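One way to picture these requirements is a named advice facility. The sketch below is hypothetical. It is not Fern's advice facility, and `define-advisable`, `add-advice`, `remove-advice` and `list-advice` are invented names. Each party wraps a function under its own name, can list what others have added, and can remove only its own modification. The original definition is never copied or edited.

```lisp
;; Hypothetical advice registry: function name -> list of (advice-name . fn).
(defvar *advice* (make-hash-table :test 'eq))
;; Function name -> unadvised implementation.
(defvar *base* (make-hash-table :test 'eq))

(defun call-advised (fname &rest args)
  "Call FNAME's base implementation wrapped in all of its advice.
The most recently added advice runs outermost."
  (labels ((run (chain args)
             (if (null chain)
                 (apply (gethash fname *base*) args)
                 ;; Each advice receives a NEXT function plus the arguments.
                 (apply (cdr (first chain))
                        (lambda (&rest next-args) (run (rest chain) next-args))
                        args))))
    (run (gethash fname *advice*) args)))

(defmacro define-advisable (name lambda-list &body body)
  "Define NAME so that its behavior can be advised later."
  `(progn
     (setf (gethash ',name *base*) (lambda ,lambda-list ,@body))
     (defun ,name (&rest args) (apply #'call-advised ',name args))))

(defun remove-advice (fname advice-name)
  (setf (gethash fname *advice*)
        (remove advice-name (gethash fname *advice*) :key #'car)))

(defun add-advice (fname advice-name fn)
  "Add (or replace) the advice called ADVICE-NAME on FNAME."
  (remove-advice fname advice-name)
  (push (cons advice-name fn) (gethash fname *advice*)))

(defun list-advice (fname)
  "Introspection: names of the active advice on FNAME, outermost first."
  (mapcar #'car (gethash fname *advice*)))

;; Two independent parties modify GREET without touching its definition.
(define-advisable greet (name)
  (format nil "Hello, ~A" name))

(add-advice 'greet 'shout
            (lambda (next name) (string-upcase (funcall next name))))
(add-advice 'greet 'exclaim
            (lambda (next name) (concatenate 'string (funcall next name) "!")))

(greet "mage")        ; => "HELLO, MAGE!"
(list-advice 'greet)  ; => (EXCLAIM SHOUT)
```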

If one is to have a healthy extension ecosystem, such design is of utmost importance.

Modularity and Minimalism

No hoarding like in Emacs.[3]

Oh wait, I am sorry, I didn't mean it quite like that.

No HOARDING like in Emacs.

[3] The only reason for this seems to be the assignment of copyright to the FSF.

Anything that can reasonably be implemented as an external package – should be.

The core programs and libraries are there for defining the building blocks and the common API, not for acting like a distribution.

As such, the core is just a set of libraries that you load into your image.

Just as well, the core itself should be modular (where it makes sense), so that the user may easily replace any of its parts with someone else's package for a part that he wants replaced.

Same, of course, goes for any backends: they are completely separated from the core and just implement the API requirements.

An Option of Speed

Speed is one of many arguments that proponents of mainstream languages use when flaming against high-level, powerful languages, and quite possibly the only one they are right about (and only remotely, at that).

But even then, Common Lisp lends itself well to user optimizations through its gradual typing system and various declarations, which allow for compile-time optimizations. Possibly, the one essential thing that's beyond the reach of the application programmer is the garbage collector.[4]

[4] And even that might not be forever. CL compilers are getting ever more sophisticated and modular (see SICL, for instance). Possibly, one day, (some) compiler internals might become hackable in user space, just like anything else, by means of some future de facto standard. We don't know the limits yet.

However, not everything is down to the language. The core libraries may help the user gain speed when it's required. For instance, in Mage, geometrical primitives will be implemented as flexible Knowledge-Representation objects. But, in turn, these KR implementations will rely on the relatively lightweight and simple structs that implement all the necessary functionality. These structs, along with the machinery to manipulate them, will all be exposed to the users, in case they want to make a million triangles or something.
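Here is a rough illustration of such a struct layer. It assumes nothing about Mage's actual geometry API: `vec2`, `vec2-add` and `centroid` are hypothetical names. Typed struct slots, together with type and optimization declarations, give an implementation like SBCL enough information to compile tight numeric code. All of it remains ordinary Common Lisp.

```lisp
;; A lightweight 2D point with typed slots. Implementations such as SBCL
;; can store DOUBLE-FLOAT slots unboxed and inline the accessors.
(defstruct (vec2 (:constructor vec2 (x y)))
  (x 0d0 :type double-float)
  (y 0d0 :type double-float))

(declaim (inline vec2-add))
(defun vec2-add (a b)
  "Return a new VEC2, the sum of A and B."
  (declare (type vec2 a b)
           (optimize (speed 3) (safety 1)))
  (vec2 (+ (vec2-x a) (vec2-x b))
        (+ (vec2-y a) (vec2-y b))))

(defun centroid (points)
  "Average of a non-empty simple vector of VEC2s."
  (declare (type simple-vector points)
           (optimize (speed 3) (safety 1)))
  (let ((sx 0d0) (sy 0d0) (n (length points)))
    (declare (type double-float sx sy) (type fixnum n))
    (loop for p across points
          do (incf sx (vec2-x p))
             (incf sy (vec2-y p)))
    (vec2 (/ sx n) (/ sy n))))

;; For example, the centroid of a triangle:
(centroid (vector (vec2 0d0 0d0) (vec2 2d0 0d0) (vec2 1d0 3d0)))
```

In the design described above, the flexible KR objects would sit on top of structs like these. Bulk work, such as the million triangles, could go to the structs directly.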

So, some design choices need to consider and account for performance, especially when it comes to graphics.

Homogeneity

The core should NOT have a foreign-language plugin/extension API.

A foreign API, no matter how good, can never be powerful enough to offer the original hackability of the image-based system that it exposes. Only the native packages do.

Second, if a foreign-language API were to be introduced, the ecosystem would effectively become fractured, especially so when multiple languages are allowed to use the API.

The motivation for a foreign plugin API (of the kind found in VS Code and Neovim) is nothing but opportunistic: as a rule, the goal is to attract more people to write plugins. Plugins attract users. More plugins = more users.

The reality ≠ sunshine, though: the power user, who likes power and typically forms the real core of the development effort, now has to deal with a plethora of (likely inferior, less powerful) languages, because that's what the ecosystem becomes polluted with.

And, what's worse: power is lost across the gap that the foreign API has created. Just as the foreign plugin can't interact with the native language directly, neither can the user work with the foreign code from within the image. The benefit of an image-based system is thus effectively diminished. Introspection and interoperability die, and the system is not live anymore.

Not to mention the fact that foreign languages evolve, break compatibility and sometimes even lose popularity (read: die), so the ecosystem becomes dependent on the forces beyond the original choice of language. The result is a biological bomb with a years-long fuse.

A foreign extension interface creates an artificial division which is ultimately detrimental and unhealthy, and immediately deadly for truly interactive systems. It is simply bad design from the point of view of a power user.

Also, see my opinion on Guile in this article.

There's No Such Thing As Sane Defaults

Among the power users and developers there lives a vision of something akin to a fabled unicorn: sane defaults.

Sane defaults are a hallmark of great software. They tell you what's right and sensible, they show you a way forward.

Well and good, up until one fine day a subject comes up on the mailing list: this once perfectly sane default is now actually insane! Panic and bewilderment ensue. A commotion with some chaos mixed in. But no matter what everybody says or does, or what arguments finally surface, it can only end in one of two ways:

- Let's break the old behavior and alienate some users.

- Let's stay with the old behavior and keep alienating some other users.

The classic trolley problem.

How about this instead: the user has to pick what he wants.

And with some concept along the lines of named versioned configurations, the process of such customization can even be quite painless. What these configurations would do is hold all the values used to customize the system. These configurations may be supplied either by the package itself or from elsewhere. But they encapsulate all the configurable behavior of a package. And there may be multiple of them. So, the author of the configuration, when he wants to introduce or change a value that breaks some behavior, derives a new one with the version bumped, as a separate object.

A slightly more interesting variation on the idea is to have parameterized configurations which take certain arguments (version could be one) from the user and return a set of values to be finally applied to the configured environment.

When the user installs a package, all he has to do is pick a version (or a label such as "latest") among the available configurations.
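Here is a minimal sketch of named, versioned configurations. It assumes a configuration is just a plist of settings plus an optional parent it derives from. The names `define-configuration`, `find-configuration` and `config-value` are hypothetical and are not the design discussed later for Fern. Deriving a new version records only what changed, and lookup falls back through the parents. The user's pick, either a version number or `:latest`, is always explicit.

```lisp
(defvar *configurations* '()
  "All registered configurations, newest first.")

(defstruct configuration
  name       ; symbol naming the configuration family
  version    ; integer, bumped whenever behavior changes
  parent     ; configuration this one derives from, or NIL
  settings)  ; plist of settings that differ from the parent

(defun find-configuration (name version)
  "VERSION is an integer, or :LATEST for the highest registered version."
  (let ((candidates (remove-if-not (lambda (c) (eq (configuration-name c) name))
                                   *configurations*)))
    (if (eq version :latest)
        (first (sort (copy-list candidates) #'> :key #'configuration-version))
        (find version candidates :key #'configuration-version))))

(defun define-configuration (name version settings &key derive-from)
  "Register a configuration. DERIVE-FROM is the parent's version number."
  (let ((config (make-configuration
                 :name name :version version :settings settings
                 :parent (and derive-from
                              (find-configuration name derive-from)))))
    (push config *configurations*)
    config))

(defun config-value (config key &optional default)
  "Look KEY up in CONFIG, then in the configurations it derives from."
  (loop for c = config then (configuration-parent c)
        while c
        do (let ((v (getf (configuration-settings c) key '%missing)))
             (unless (eq v '%missing)
               (return v)))
        finally (return default)))

;; Version 1 ships the original behavior.
(define-configuration 'editor 1 '(:indent 2 :wrap-lines nil))
;; Version 2 changes one value and records only that difference.
(define-configuration 'editor 2 '(:wrap-lines t) :derive-from 1)

(config-value (find-configuration 'editor 1) :wrap-lines)       ; => NIL
(config-value (find-configuration 'editor :latest) :wrap-lines) ; => T
(config-value (find-configuration 'editor :latest) :indent)     ; => 2
```

A parameterized configuration, in the same spirit, could simply be a function that takes the user's arguments and returns such a settings plist.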

This way no one can blame the author of the configuration for breaking things, not when done in the name of progress anyway.

Configurations force the user to be responsible for their setup.

So, no more arguing about keybindings. No more arguing about how some behavior is obviously better than some other behavior, but, see, changing it will anger a few mythical mammoths, so that's why nothing is ever going to improve and that's why we are gonna be stuck on this one bad thing forever.

The only good defaults are no defaults.

And if you don't like someone else's configuration, just build your own and distribute it. And since configurations can layer, you need only specify the things you want to change.

I discuss how configuration might be implemented in Fern in a later section.

Power

Power is the one real first-class citizen here.

All the points that you just read above are but the reflections of this tenet.

If you can't easily modify your program, you have just lost out on some power.

If you have to restart to make something work, your power is effectively diminished.

If you can't make something run fast enough, that's some power gone.

If you have to resort to hacks in order to change some behavioral facet of a live system, guess what, mentally subtract some power from your big tank of POWER.

Power not understood is power lost.

And a power that can't be wielded easily is not much of a power to begin with, but only an illusion of such.

So, that means that simplicity is an implicit requirement, and not only because it makes it easy to reason about things, but also because elegant building blocks tend to underlie powerful systems.

Lego is kind of inspiring in that sense: all you have to do is get a grasp of a few building blocks of various shapes and sizes, and then figure out how to snap them together. Then you can construct just about anything, without needing further guidance or having to look up the Lego manual.

Programming is more complex than Lego. But the idea of a few building blocks that snap together can be a source of strength there as well.

So, the foundational goal of the project is to be the source of power for the power user.

But it's important to understand that power doesn't come from having a general enough framework. It comes from the ability to extend, combine and build upon a small set of primitives to build your own specialized frameworks and tools.

And another point here is: structure. Structure is necessary for the ease of extension and reasoning. The discussion on this with regard to text takes place in the Rune section.

Project's Goals

Before we begin, there's something to be said: what you will find below are designs, and most of them haven't been coded yet.

My goal here is not to tell you every detail (although, there is going to be a bit of detail), but rather for you to understand the capabilities and possibilities of each system, along with its motivation and purpose. I will try to show the most illustrative parts, so you can autocomplete the mental picture for other details and the system as a whole.

I want to share these designs because I find them exciting, and, I hope, so will you.

PS If you are unfamiliar with Lisp syntax, don't feel intimidated. Many constructs here will be unfamiliar to Lispers as well.

Fern: A GUI Toolkit partially implemented

A form of self-repetition, ferns have long served as a prime example of fractal-like structures.

They are indigenous to just about every corner of the Earth.

I dig their simplicity.

—

Fern is a toolkit for constructing graphical user interfaces (GUIs).

In the section on power, I have mentioned Lego. So, in Fern, the Lego blocks are schemata and the way you click them together is via constraints.

These concepts are in no way specific to the construction of user interfaces; however, they do seem to be particularly fit for the job. The process of interface-building in Fern is heavily rooted in them.

Let's discuss everything point by point. It may get just a tad technical at times, but it seems worth it to describe everything in sufficient detail. This whole Fern section is the most technically-involved of the article, so if you find it slow-moving, I would still recommend at least skimming through for the general ideas.

(To be honest, I am not all too satisfied with the following explanations. In my opinion, technical explanations should be a part of the environment that they refer to. A live example can oftentimes bring greater and more immediate understanding than a wall of text. In this section, I had to resort to text and just plain, non-live examples. So, please, try not to concentrate on the syntax, but rather on the overall picture, because that's what's important.)

KR: a Knowledge Representation System[5]

[5] For an extensive overview of kr, see "KR: an Efficient Knowledge Representation System" by Dario Giuse. Also, Garnet maintains its own version of KR.[1]

kr is a semantic knowledge network, where each object is a chunk of information known as a schema.

It's sometimes best to just show an example, because kr is very simple to use. Let's define a schema for the mythical Kraken:[6]

(create-schema kraken
  (:description "A legendary sea monster.")
  (:victims "Many ships and the souls on them."))

[6] Note that not all code below will work if you test it with Garnet's kr. I have changed some syntax for readability.

Let's get its description:

(get-value kraken :description)

yields → "A legendary sea monster."

You can easily add a slot to a schema.

(set-value kraken :first-sighted '18th-century)

adds a slot :first-sighted with value '18th-century to the kraken schema.

Now, that's all very interesting, you might be saying, but so far this looks just like a regular hash map! Well, it kind of is! But worry not, there's more! How about some multiple inheritance at runtime?

(create-schema mythical-monster
  (:location 'unknown)
  (:victims 'unknown))

(create-schema cephalopod
  (:location 'sea)
  (:group 'invertebrates)
  (:size "From 1cm to over 14m long."))

(set-value kraken :is-a (list cephalopod mythical-monster))

:is-a is a special slot that defines a relation between objects, and we have just assigned to it a list of two schemata: cephalopod and mythical-monster. This will make Kraken inherit their slots, with priority given to cephalopod.

Now, the location, (get-value kraken :location), will yield SEA.

Note that both mythical-monster and cephalopod define the :location slot.

The lookup is done as a depth-first search over the defined relations in the object.

Note how victims, (get-value kraken :victims), are not unknown, but rather "Many ships and the souls on them.", which we have specified in the original definition of our schematic Kraken. Setting a slot locally shadows the inherited value.
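The lookup rule is simple enough to model in a few lines of portable Common Lisp. This is a toy, not Garnet's kr, whose real implementation is more elaborate and much faster. The names `make-toy-schema`, `toy-set` and `toy-get` are invented for the example. A schema is a hash table, and `:is-a` holds an ordered list of parents. A local value wins; otherwise the parents are searched depth-first, left to right.

```lisp
;; Toy prototype objects (not Garnet's kr): a schema is a hash table of slots.
(defun make-toy-schema (&rest slots-and-values)
  (let ((schema (make-hash-table :test 'eq)))
    (loop for (slot value) on slots-and-values by #'cddr
          do (setf (gethash slot schema) value))
    schema))

(defun toy-set (schema slot value)
  (setf (gethash slot schema) value))

(defun toy-get (schema slot)
  "Return SLOT's value and T, or NIL and NIL if it is not found.
A local value wins; otherwise the :IS-A parents are searched
depth-first, left to right. Nothing is ever copied."
  (multiple-value-bind (value found) (gethash slot schema)
    (if found
        (values value t)
        (dolist (parent (gethash :is-a schema) (values nil nil))
          (multiple-value-bind (v f) (toy-get parent slot)
            (when f (return (values v t))))))))

(defparameter *mythical-monster*
  (make-toy-schema :location 'unknown :victims 'unknown))
(defparameter *cephalopod*
  (make-toy-schema :location 'sea :group 'invertebrates))
(defparameter *kraken*
  (make-toy-schema :description "A legendary sea monster."
                   :victims "Many ships and the souls on them."))

(toy-set *kraken* :is-a (list *cephalopod* *mythical-monster*))
(toy-get *kraken* :location)  ; => SEA, T (from the first parent)
(toy-get *kraken* :victims)   ; => "Many ships and the souls on them.", T

;; Re-parenting at runtime only changes the :IS-A list.
(toy-set *kraken* :is-a (list *mythical-monster* *cephalopod*))
(toy-get *kraken* :location)  ; => UNKNOWN, T
```

Because values are looked up rather than copied, re-parenting is just a matter of setting `:is-a`. The toy does not guard against cycles in the `:is-a` graph.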

The interesting thing about all this is how you can update the inheritance of a schema at runtime, basically at no cost. Values are never copied in an inheritance relation, they are just looked up. (But it's also possible to set up local-only slots whose values are either copied or set to a default value.)

Another property of kr is that we can add (or remove) slots without ever touching the original definition: we do this simply by the set-value operation. We will get back to this point later when we talk about customization.

kr represents a prototype-instance paradigm of programming. It is an approach to object-oriented programming which says there's no distinction between an object and a class. There's just a prototype, which is an object which can be inherited from.

What can be more object-oriented than that?[7]

[7] I can't seem to find the source of this quote.

In a conventional OO system, objects have types. But with prototype OO, all schemata are of the same type, schema, and are instead differentiated by names and contents.

In fact, a schema may even be unnamed.

Behaviors are simply Lisp functions that are stored in slots. That's useful for late binding.

One may set up hooks that react to value changes in any given slot. It will also be possible to add hooks for the addition and removal of slots.
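Both ideas can be sketched on a bare hash table. Again, this is a toy with hypothetical names (`make-object`, `slot-set`, `add-slot-hook`, `send`), not kr's API. A behavior is a function stored in a slot and fetched at call time, so replacing it changes the behavior for every later caller. A hook is a function that runs whenever a given slot is set.

```lisp
(defun make-object ()
  (make-hash-table :test 'eq))

(defun add-slot-hook (object slot fn)
  "Run FN with (OLD NEW) whenever SLOT of OBJECT is set.
Hooks are kept in OBJECT itself, as a plist under :%HOOKS."
  (push fn (getf (gethash :%hooks object) slot)))

(defun slot-set (object slot value)
  (let ((old (gethash slot object)))
    (setf (gethash slot object) value)
    (dolist (fn (getf (gethash :%hooks object) slot))
      (funcall fn old value))
    value))

(defun send (object slot &rest args)
  "Late-bound call: fetch the function stored in SLOT at call time."
  (apply (gethash slot object) object args))

(defparameter *ship* (make-object))
(slot-set *ship* :speed 10)
(slot-set *ship* :describe
          (lambda (self) (format nil "A ship at ~A knots." (gethash :speed self))))

(defparameter *log* '())
(add-slot-hook *ship* :speed (lambda (old new) (push (list old new) *log*)))

(slot-set *ship* :speed 12)   ; the hook records (10 12)
(send *ship* :describe)       ; => "A ship at 12 knots."

;; Replacing the behavior affects every later caller; nothing is recompiled.
(slot-set *ship* :describe (lambda (self) (declare (ignore self)) "Sunk."))
(send *ship* :describe)       ; => "Sunk."
```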

There are a few other things in kr, but this should do for a quick overview.

That is all VERY fine, you might say. But is that all?

Constraints

With the Multi-Garnet project[8] and its solver SkyBlue[9], it is possible to set up constraints on kr slots.[10]

[8] Multi-Garnet is a project that integrates the SkyBlue constraint solver with Garnet. For a technical report, see "Multi-Garnet: Integrating Multi-Way Constraints with Garnet" by Michael Sannella and Alan Borning.

[9] See "The SkyBlue Constraint Solver and Its Applications" by Michael Sannella.

[10] Please note that the code below will not work if you test it with Garnet's multi-garnet. I have changed some syntax for readability. Also, multi-garnet integrated with Garnet formulas, with the goal of replacing them. Garnet formulas are done away with altogether in Fern.

Constraints may be used to define a value of a slot in terms of other slots, i.e. outputs in terms of inputs.

Whenever one of the inputs changes, the output is then recalculated automatically.

Constraints keep the slot values consistent. Whenever one value changes somewhere, it might have a ripple effect on a lot of other values. The most widespread antipattern in most GUI frameworks is the handling of changes with callbacks. Constraints provide a declarative way to deal with this - simply declare the dependencies between the slots.

- One-Way Constraints

Consider this rather bland example, where we define y to be 0 when x is less than 0.5, and 1 otherwise.

(create-schema stepper
  (:x 0.75)
  (:y 0))

(set-value stepper :y#
  (create-constraint :medium (x y)
    (:= y (if (< x 0.5) 0 1))))

This is a rather usual schema, until we add another slot, y#, which holds a constraint that defines y in terms of x.

The variable names, x and y, refer to the slots that are being accessed, :x and :y.

Right after the addition of this constraint, y is immediately recalculated to be 1.

If we now set x to 0.25, the constraint solver will detect the change and trigger the recalculation, this time yielding 0 for y.

So, the outputs are evaluated eagerly (as opposed to lazily, that is, upon access).

Importantly, the output form may be arbitrary Lisp code.
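To make "eager" concrete, here is a toy one-way constraint in plain Common Lisp. The names are hypothetical, and this is not how Multi-Garnet or SkyBlue is implemented. Setting a slot immediately re-runs every constraint that reads it. Unlike the stepper example above, the toy lists only the true inputs (`:x`). It also ignores strengths and would loop forever on cyclic constraints, which is precisely what a real solver takes care of.

```lisp
;; Toy one-way constraints (hypothetical names, not Multi-Garnet's API).
(defstruct cobj
  (slots (make-hash-table :test 'eq))
  (constraints '()))   ; each constraint: (output inputs function)

(defun cget (obj slot)
  (gethash slot (cobj-slots obj)))

(defun cset (obj slot value)
  "Store VALUE, then eagerly re-run every constraint that reads SLOT."
  (setf (gethash slot (cobj-slots obj)) value)
  (dolist (c (cobj-constraints obj) value)
    (when (member slot (second c))
      (run-constraint obj c))))

(defun run-constraint (obj constraint)
  "Compute the constraint's output from the current input values."
  (destructuring-bind (output inputs fn) constraint
    (cset obj output (apply fn (mapcar (lambda (s) (cget obj s)) inputs)))))

(defun add-one-way (obj output inputs fn)
  "Attach a constraint and run it once immediately."
  (let ((c (list output inputs fn)))
    (push c (cobj-constraints obj))
    (run-constraint obj c)))

;; The stepper: :y is 0 when :x < 0.5, and 1 otherwise.
(defparameter *stepper* (make-cobj))
(cset *stepper* :x 0.75)
(add-one-way *stepper* :y '(:x) (lambda (x) (if (< x 0.5) 0 1)))
(cget *stepper* :y)      ; => 1, T (computed as soon as the constraint was added)
(cset *stepper* :x 0.25)
(cget *stepper* :y)      ; => 0, T (recomputed the moment :x changed)
```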

Constraints also have a strength, like :medium in the case above. There may be multiple constraints defining the value of the same slot, and the strength decides which one to choose. We will discuss strength a bit later.

A constraint needs to be a part of a schema for it to take effect. As such, you can remove a constraint simply by removing the slot or by setting it to nil.

(Also note that slots that contain constraints may be arbitrarily named and don't have to contain a hashtag, like in y#.)

- Multi-Way Constraints

One-way definitions are interesting. But, sometimes, it may be useful to define some things in terms of each other. Let's see a classic example of a temperature converter.

(create-schema thermometer
  (:celsius -40)
  (:fahrenheit -40)
  (:celsius# (create-constraint :medium (celsius fahrenheit)
               (:= celsius (* (- fahrenheit 32) (/ 5 9)))))
  (:fahrenheit# (create-constraint :medium (celsius fahrenheit)
                  (:= fahrenheit (+ 32 (/ (* celsius 9) 5))))))

So there are two slots, one for degrees Celsius, one for degrees Fahrenheit. We set up two other constraints, each defining its output's relation to the other variable.

(set-value thermometer :fahrenheit 212)

If we inspect celsius now, we see that it has become 100. Similarly, if you change fahrenheit, say, to 32, celsius will become 0.

The constraint solver is smart enough to figure out that these two variables depend on each other, so there's no infinite loop of change, and only a single calculation is done. In general, the solver allows circular definitions like that (possibly involving multiple variables).

These two constraints can be batched together like this:

(create-schema thermometer
  (:celsius -40)
  (:fahrenheit -40)
  (:temperature# (create-constraint :medium (celsius fahrenheit)
                   (:= celsius (* (- fahrenheit 32) (/ 5 9)))
                   (:= fahrenheit (+ 32 (* celsius (/ 9 5)))))))

Now, that's shorter!
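The essential trick of a multi-way constraint can be shown in miniature. The constraint has one method per output, and an edit runs only the method that reads the edited variable. That method writes its result directly, so the change does not bounce back. This is a drastic simplification of what SkyBlue does: there are no strengths and no method graph. The names `set-temperature` and `*thermometer-methods*` are hypothetical.

```lisp
;; Toy multi-way constraint: one method per output, as (output input function).
(defparameter *thermometer* (make-hash-table :test 'eq))

(defparameter *thermometer-methods*
  (list (list :celsius :fahrenheit (lambda (f) (* (- f 32) 5/9)))
        (list :fahrenheit :celsius (lambda (c) (+ 32 (* c 9/5))))))

(defun set-temperature (slot value)
  "Store VALUE in SLOT, then run the method that reads SLOT."
  (setf (gethash slot *thermometer*) value)
  (let ((method (find slot *thermometer-methods* :key #'second)))
    (when method
      (destructuring-bind (output input fn) method
        ;; The output is written directly rather than through
        ;; SET-TEMPERATURE, so the change does not bounce back.
        (setf (gethash output *thermometer*)
              (funcall fn (gethash input *thermometer*))))))
  value)

(set-temperature :fahrenheit 212)
(gethash :celsius *thermometer*)     ; => 100, T
(set-temperature :celsius -40)
(gethash :fahrenheit *thermometer*)  ; => -40, T
```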

- Pointer Variables

So, how do we refer to variables in constraints? What can the inputs be? Well, if a slot in your schema contains another schema, you can refer to its slots using pointer variables.

Time for another example! Let's say you live in a room, in a country where it can get quite hot at times. We will model the environment as a separate object, and we will include it, along with the thermometer and a window, in the slots of our room.

(create-schema environment ()
  (:weather :stormy))

(create-schema room ()
  (:thermometer thermometer)
  (:environment environment)
  (:window (create-schema nil (:openp nil))))

Since you can't be bothered to buy an air conditioner, you use the good old window to control the temperature. Of course, there's little point in opening the window when it's too hot outside, so we keep it closed then. We set up this constraint like this:

(set-value room :temp-control#
  (create-constraint :medium
    ;; variables
    ((window-open-p (:window :openp))
     (temperature (:thermometer :celsius))
     (weather (:environment :weather)))
    ;; output
    (:= window-open-p
        (and (> temperature 25)
             (not (eql weather :scorching-sunbath))))))

The input variable specification (:thermometer :celsius) describes a path to the celsius slot in the thermometer of the schema where the constraint is placed (room in this case).

You can use such paths when accessing slots of kr schemata:

(get-value room :environment :weather)

Paths may be as long as necessary, but every slot except the last one has to hold a schema.[11]

[11] Arbitrary access for other data structures hasn't been implemented.
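Path access amounts to a chain of lookups. Here is a toy version over hash tables. The names `toy-schema` and `get-path` are hypothetical. kr's own `get-value` handles paths itself, and the constraint system additionally tracks every step of the path, which this sketch does not.

```lisp
;; Toy schemas as hash tables; hypothetical helper names.
(defun toy-schema (&rest slots-and-values)
  (let ((table (make-hash-table :test 'eq)))
    (loop for (slot value) on slots-and-values by #'cddr
          do (setf (gethash slot table) value))
    table))

(defun get-path (object slot &rest more-slots)
  "Follow SLOT, then MORE-SLOTS. Every slot but the last must hold a schema."
  (let ((value (gethash slot object)))
    (cond ((null more-slots) value)
          ((hash-table-p value) (apply #'get-path value more-slots))
          (t (error "Slot ~S does not hold a schema." slot)))))

(defparameter *room*
  (toy-schema :thermometer (toy-schema :celsius 30)
              :environment (toy-schema :weather :stormy)))

(get-path *room* :environment :weather)  ; => :STORMY
(get-path *room* :thermometer :celsius)  ; => 30
```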

The constraint system picks up on value changes along the path and updates the outputs accordingly. So if, for instance, the value of the environment slot is swapped for another environment, then window-open-p will be recalculated.

- Stay Constraints

A stay constraint is a special kind of constraint that keeps a slot from changing. It looks like this:

(create-stay-constraint :strong :some-slot)

This may be useful when you want to ensure that some value doesn't change, even though some other constraint may have it as an output (as long as that other constraint is weaker than the stay constraint).

- Constraint Hierarchies / Strength

Multiple constraints may output to the same variable: their strengths decide which one gets to compute it.

SkyBlue9 See The SkyBlue Constraint Solver and Its Applications by Michael Sannella. constructs a method graph from the given constraints. Based on the constraint strength, this graph is used to determine the method for any given output variable.

Take a look:

    (create-schema rectangle
      (:left 0)
      (:right 10)
      (:width 10)
      ;; ...
      (:size# (create-constraint :strong (left right width)
                (:= width (- right left))
                (:= left (- right width))
                (:= right (+ left width)))))

If you set the value of right to 100 (via set-value12 Even set-value has a strength value associated with it (:strong by default). This doesn't play a role in the example, though.), one of two things could happen:

- width changes to 100, left stays the same;

- width stays the same, left changes to 90.

In this case, the first option will take effect due to the order in which we have specified the methods (width comes before left).

Other than by changing the order, you could control this by creating a dedicated constraint for each output and assigning suitable strengths to them. However, there's a simpler way, which is to add a stay constraint:

(set-value rectangle :width# (create-stay-constraint :medium :width))

Now, setting right to 100 would keep width at 10 and change left to 90.

Constraint hierarchies, defined via strengths, let you specify which methods have output priority, and thereby express rather complex relations between variables.
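The following toy sketch shows how a stay constraint can steer method selection in the rectangle example. It is not SkyBlue, which builds and incrementally maintains a whole method graph; it handles a single multi-way constraint and a single edit, and the strength list and every function name here are assumptions made for illustration.

```lisp
;;; Much-simplified sketch of strength-based method selection for the
;;; rectangle example. NOT SkyBlue: one multi-way constraint, one user edit.
;;; All names are hypothetical.

(defparameter *strengths* '(:weak :medium :strong :required)
  "Strength levels, weakest first.")

(defun stronger-p (a b)
  "True if strength A is strictly stronger than strength B."
  (> (position a *strengths*) (position b *strengths*)))

(defun choose-method (methods edited-var strength stays)
  "METHODS: list of (OUTPUT-VAR . FUNCTION) in declaration order.
STAYS: alist of (VAR . STAY-STRENGTH).
Skip methods that would overwrite the variable the user just edited, or a
variable held by a stay at least as strong as the constraint. Among the
rest, prefer an output with no stay at all; ties go to declaration order."
  (let ((candidates
          (remove-if (lambda (method)
                       (let ((stay (cdr (assoc (car method) stays))))
                         (or (eq (car method) edited-var)
                             (and stay (not (stronger-p strength stay))))))
                     methods)))
    (or (find-if (lambda (method) (null (assoc (car method) stays)))
                 candidates)
        (first candidates))))

(defun set-rectangle-var (vars var value stays)
  "VARS is a plist with :LEFT :RIGHT :WIDTH. Set VAR to VALUE, then let the
:strong size constraint recompute one other variable."
  (let ((vars (copy-list vars))
        (methods
          (list (cons :width (lambda (v) (- (getf v :right) (getf v :left))))
                (cons :left  (lambda (v) (- (getf v :right) (getf v :width))))
                (cons :right (lambda (v) (+ (getf v :left) (getf v :width)))))))
    (setf (getf vars var) value)
    ;; Assumes some method remains selectable; a real solver would report
    ;; an unsatisfiable hierarchy instead.
    (let ((method (choose-method methods var :strong stays)))
      (setf (getf vars (car method)) (funcall (cdr method) vars)))
    vars))

;; No stay: the first method in declaration order (width) wins.
(set-rectangle-var '(:left 0 :right 10 :width 10) :right 100 '())
;; => (:LEFT 0 :RIGHT 100 :WIDTH 100)

;; A :medium stay on width steers the choice to the left method instead.
(set-rectangle-var '(:left 0 :right 10 :width 10) :right 100 '((:width . :medium)))
;; => (:LEFT 90 :RIGHT 100 :WIDTH 10)
```

The sketch only returns the new values; a real solver would also propagate the change to any other constraint that reads the recomputed variable.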

- Multi-Value Return

Multi-Garnet allows a single method to output to multiple variables. The syntax is:

(:= (x y z) (values 0 1 2))

This may be useful when your function calculates multiple values: you might want to unpack those values into several slots.

For instance, a layout function may produce the layout positions and the resulting size. So, it might be convenient to pack those values into their respective slots right away.
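In plain Common Lisp, the closest analogue is unpacking multiple return values straight into places with setf of values. The sketch below assumes a hypothetical layout-row function and uses a hash table in place of a schema's slots.

```lisp
;;; Sketch: a hypothetical LAYOUT-ROW returns two values, which are unpacked
;;; directly into two "slots" (hash-table entries) with SETF of VALUES.

(defun layout-row (widths &key (gap 2))
  "Place items of WIDTHS left to right. Return the x positions and total width."
  (let ((x 0) (positions '()))
    (dolist (w widths)
      (push x positions)
      (incf x (+ w gap)))
    (values (nreverse positions)
            (if widths (- x gap) 0))))

(defparameter *row* (make-hash-table))

(setf (values (gethash :positions *row*) (gethash :size *row*))
      (layout-row '(10 20 30)))

(gethash :positions *row*)   ; => (0 12 34)
(gethash :size *row*)        ; => 64
```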

- Laziness not implemented or planned

Stay constraints may be used to achieve a sort of laziness, where you add and remove a stay constraint at runtime. This can be done by hand. However, some convenient way to achieve laziness would be nice to have.13 This could be achieved exactly by adding and removing a high-strength stay constraint upon access. However, the values that depend on it might also need to be recomputed upon access. Marking a slot as lazy would involve embedding information about it into the constraint system for the values that depend on it. It shouldn't be too hairy, but it's not a walk in the park either. I will probably skip this feature.

This could come in handy for expensive computations.

Note that laziness and the rest of the features in this section haven't been implemented (some of them not yet, others not at all).

- Parameterized Pointer Variables not implemented yet

Pointer variables (described above) are nice, but sometimes the number of inputs changes, or you don't even know what your inputs are in advance. In that case, you might want to treat your inputs in bulk and work with them in a container. There are plenty of uses for it: later on, in the section on cells, you will see a couple of examples.

But generally, a schema might contain multiple other schemas of some kind, and you might want to calculate something based on some particular slot in each one. Just as well, you might want to access a particular set of objects within a custom container (discussed later in the section on cells).

If all this sounds pretty nebulous, then here's a quick example:

    (create-schema some-sand
      (:grain-1 (create-schema (:mass (gram 0.011))))
      (:grain-2 (create-schema (:mass (gram 0.012))))
      (:a-rock (create-schema (:mass (gram 4.37))))
      (:total-mass#
       (create-constraint :medium
         ((m1 :grain-1 :mass)
          (m2 :grain-2 :mass)
          (m3 :a-rock :mass))
         (:= total-mass (+ m1 m2 m3)))))

Now, that total mass constraint is a totally ugly mess, to be honest. If you add a grain, you have to rewrite the whole constraint. If you decide a little rock isn't good enough for a proper sand pile: rewrite. So, that kind of sucks.

There's a remedy of sorts: you can add constraints programmatically (this is already existing functionality). So, you could regenerate the constraint on mass whenever a slot is added or removed. This could be done via a hook or, if a meta-list of all slots is available, through yet another constraint that takes that list as an input and outputs the desired :total-mass#.

So, you can solve the problem by hand, but most people only have two, and the number of constraints in your system may be many more.

It would be more viable to introduce some syntax for pointer variables that would track slot additions in the schemas along the path.

I don't yet know what the exact syntax will be; there are a few considerations.14 The syntax should be extendable to containers other than schemas, allow either predicates or filters, allow either values or pointers, and multiple arbitrary pickers/wildcards along the path. But the general idea is to have something like (masses * :mass) as an input and then return (apply #'+ masses). It's a work in progress, but the utility is apparent.

—

There's a different way to approach generalization of pointer variables and I will discuss it in the section on KR with Generalized Selectors.
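Coming back to the (masses * :mass) idea, a rough sketch of how a wildcard step could be resolved looks like this. Schemata are again modeled as hash tables, schema and collect-path are hypothetical helpers, and masses are plain numbers rather than (gram ...) values; the real syntax, as noted above, is still undecided.

```lisp
;;; Hypothetical sketch of a wildcard path over nested hash tables.
;;; The * step means "every value in this schema"; other steps are slot names.
;;; SCHEMA and COLLECT-PATH are illustrative helpers, not KR or Multi-Garnet API.

(defun schema (&rest slots)
  "Build a hash table from alternating slot names and values."
  (let ((table (make-hash-table)))
    (loop for (name value) on slots by #'cddr
          do (setf (gethash name table) value))
    table))

(defun collect-path (object path)
  "Return a list of every value reachable from OBJECT along PATH."
  (cond ((null path) (list object))
        ((not (hash-table-p object)) '())          ; dead end: not a schema
        ((eq (first path) '*)
         (loop for child being the hash-values of object
               append (collect-path child (rest path))))
        (t
         (multiple-value-bind (value found) (gethash (first path) object)
           (if found (collect-path value (rest path)) '())))))

(defparameter *sand*
  (schema :grain-1 (schema :mass 0.011)
          :grain-2 (schema :mass 0.012)
          :a-rock  (schema :mass 4.37)))

;; Adding or removing a grain needs no change to this expression:
(apply #'+ (collect-path *sand* '(* :mass)))
```

A real parameterized pointer variable would also have to track slot additions and removals along the path so that the constraint re-runs; this sketch only resolves the path once.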

- Advice not implemented yet

It should be possible to advise constraints.

Advising a constraint means prepending, appending or wrapping its function in your own code. Multiple parties in different places may advise a single function and the effects will simply stack. Later inspection and removal of advice should be possible.

This notion comes from Emacs and often proves useful for customization.
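A minimal sketch of Emacs-style advice over a plain function object follows. The structure and the names advisable, add-advice, remove-advice and call-advisable are assumptions for illustration, not a planned Fern API; the sketch shows advice stacking, inspection through the advice list, and removal.

```lisp
;;; Minimal sketch of Emacs-style advice around a plain function object.
;;; All names are hypothetical.

(defstruct advisable
  original            ; the function being advised
  (advice '()))       ; entries (NAME KIND FN), most recently added first

(defun remove-advice (advisable name)
  "Remove the advice called NAME, if any."
  (setf (advisable-advice advisable)
        (remove name (advisable-advice advisable) :key #'first))
  advisable)

(defun add-advice (advisable name kind fn)
  "KIND is :BEFORE, :AFTER or :AROUND. Re-adding NAME replaces the old entry."
  (check-type kind (member :before :after :around))
  (remove-advice advisable name)
  (push (list name kind fn) (advisable-advice advisable))
  advisable)

(defun call-advisable (advisable &rest args)
  "Call the original function wrapped in every piece of advice."
  (let ((fn (advisable-original advisable)))
    ;; Wrap oldest first, so the most recently added advice ends up outermost.
    (dolist (entry (reverse (advisable-advice advisable)))
      (destructuring-bind (name kind advice-fn) entry
        (declare (ignore name))
        (let ((inner fn))
          (setf fn (ecase kind
                     (:before (lambda (&rest a)
                                (apply advice-fn a)
                                (apply inner a)))
                     (:after (lambda (&rest a)
                               (multiple-value-prog1 (apply inner a)
                                 (apply advice-fn a))))
                     (:around (lambda (&rest a)
                                (apply advice-fn inner a))))))))
    (apply fn args)))

;;; Usage: round the output of a Celsius -> Fahrenheit function, then undo it.
(defparameter *fahrenheit*
  (make-advisable :original (lambda (c) (+ 32 (* c 9/5)))))

(add-advice *fahrenheit* :rounding :around
            (lambda (next c) (values (round (funcall next c)))))
(call-advisable *fahrenheit* 21)   ; => 70
(remove-advice *fahrenheit* :rounding)
(call-advisable *fahrenheit* 21)   ; => 349/5
```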

- Change Predicates not implemented yet

A function may produce an output equivalent to the already-existing value in the slot. This shouldn't trigger a recalculation.

It should be possible to specify a predicate for a slot that would identify whether two values are equivalent and prevent unnecessary recalculation when other slots depend on it.
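A minimal sketch of the idea: each watched slot carries its own equivalence predicate, and dependents are only notified when the new value is not equivalent to the old one. The watched structure and set-watched are hypothetical names, and a counter stands in for real recalculation.

```lisp
;;; Sketch of a slot with a change predicate. All names are hypothetical.

(defstruct watched
  value
  (test #'eql)          ; equivalence predicate for this slot
  (dependents '()))     ; functions called when the value really changes

(defun set-watched (watched new-value)
  "Store NEW-VALUE and notify dependents, unless it is equivalent to the
current value under the slot's test. Return T if dependents were notified."
  (unless (funcall (watched-test watched) (watched-value watched) new-value)
    (setf (watched-value watched) new-value)
    (dolist (dependent (watched-dependents watched) t)
      (funcall dependent new-value))))

(defparameter *recalculations* 0)

(defparameter *bounds*
  (make-watched :value (list 0 0 10 10)
                ;; Fresh but structurally equal lists count as the same value.
                :test #'equal
                :dependents (list (lambda (new-value)
                                    (declare (ignore new-value))
                                    (incf *recalculations*)))))

(set-watched *bounds* (list 0 0 10 10))   ; => NIL, no recalculation
(set-watched *bounds* (list 0 0 20 10))   ; => T
*recalculations*                          ; => 1
```

With the default eql test, the first call would have triggered a needless recalculation, since a freshly consed list is never eql to the old one.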

- An Algebraic Solver not implemented or planned

You might have also noticed a slight inconvenience in the Fahrenheit↔Celsius conversion example: you have to define the same algebraic formula twice. Multi-Garnet could integrate other, more general or specialized solvers (such as for linear equations or inequalities).

Layout code, for example, could benefit from such an addition.

Not planned as part of the campaign.

- Asynchronous Execution not implemented yet

Sometimes, you might want to compute something expensive. Asynchronous execution should be an option.

Cells not implemented yet

The centerpiece of GUI-building in Fern is a cell.

Think of a cell as a biological cell, some shape that reacts and interacts with its environment, which is, for the most part, other cells.

A cell has a shape, a position in space, a transformation, a viewport, context (discussed later), configurations (also discussed later) and a few other things.

- The Tree Structure

Unlike a biological cell, a fern cell may contain other cells, thus yielding a tree-like structure.

    (create-cell bicycle
      (:handlebar
       (create-cell (:is-a (list handlebar))
                    (:shape #|...|#)
                    (:angle 0)))
      (:front-wheel
       (create-cell (:is-a (list bicycle-wheel))
                    (:shape (make-circle #|...|#))
                    (:angle#
                     (create-constraint :medium
                       ((wheel-angle '(:angle))
                        (handlebar-angle '(:super :handlebar :angle)))
                       (:= wheel-angle handlebar-angle)))))
      #|...|#)

This creates a schema named bicycle that :is-a cell.15 The :is-a slot is constrained to append cell to it (even if the user supplies his own :is-a). The constraint may be removed if undesired, of course. The bicycle contains a few other cells within, as slots.

A cell's :super slot stores the cell that contains it and is automatically reset upon being placed into another cell (or moved out of one). If a cell has no super, it's called a root cell. The :front-wheel and :handlebar cells got bicycle automatically assigned to their :super slots.

Bicycle, on the other hand, has its :parts slot populated with its cellular slots: '(:front-wheel :handlebar #|...|#). If you add a :frame cell to the bicycle cell, or remove :front-wheel, the parts will be updated accordingly.16 This is simply done via slot-removal/addition hooks, available to the user.

So, in order to build a tree of cells, you just place cells within other cells: all the bookkeeping is taken care of.
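To illustrate that bookkeeping, here is a sketch with cells modeled as hash tables. make-cell, add-part and remove-part are hypothetical stand-ins for the slot-addition/removal hooks; in Fern, this would happen automatically whenever a cell is stored in, or removed from, a slot.

```lisp
;;; Sketch of :super / :parts bookkeeping with cells as hash tables.
;;; MAKE-CELL, ADD-PART and REMOVE-PART are hypothetical names.

(defun make-cell ()
  (let ((cell (make-hash-table)))
    (setf (gethash :super cell) nil
          (gethash :parts cell) '())
    cell))

(defun remove-part (cell name)
  "Remove the part stored under NAME, clearing its :super. Return the part."
  (let ((part (gethash name cell)))
    (when part
      (setf (gethash :super part) nil)
      (remhash name cell)
      (setf (gethash :parts cell) (remove name (gethash :parts cell))))
    part))

(defun add-part (cell name part)
  "Store PART in CELL's slot NAME, keeping :super and :parts consistent."
  (let* ((old-super (gethash :super part))
         (old-name (and old-super
                        (find part (gethash :parts old-super)
                              :key (lambda (slot) (gethash slot old-super))))))
    ;; A cell lives in one place only: detach it from its previous super.
    (when old-name
      (remove-part old-super old-name)))
  (when (gethash name cell)              ; replacing an existing part
    (remove-part cell name))
  (setf (gethash name cell) part
        (gethash :super part) cell)
  (pushnew name (gethash :parts cell))
  part)

(defparameter *bicycle* (make-cell))
(defparameter *wheel* (make-cell))
(defparameter *handlebar* (make-cell))

(add-part *bicycle* :front-wheel *wheel*)
(add-part *bicycle* :handlebar *handlebar*)
(gethash :parts *bicycle*)        ; => (:HANDLEBAR :FRONT-WHEEL)

(remove-part *bicycle* :front-wheel)
(gethash :parts *bicycle*)        ; => (:HANDLEBAR)
```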

Shape of the Bicycle

You might wonder: what is the shape of bicycle from the example above?

By default, the shape of a cell is defined to be the bounding rectangle of the union of the shapes of its :parts.

How is this accomplished? You might notice that if you simply constrained on :parts (or on a list of the values of the parts) and then changed the shape of some part, the constraint wouldn't pick up on the change, because the value of :parts itself wouldn't change.

Instead, this is accomplished via parameterized pointer variables, or by generating a constraint based on the parts manually.
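Whatever mechanism triggers it, the computation itself is simple. This sketch assumes rectangles are (x y width height) lists rather than Fern's shape structs, and bounding-rectangle is a hypothetical name. In a real constraint, the crucial part would be that each part's shape is an input, not just the :parts list.

```lisp
;;; Sketch: the default shape of a cell as the bounding rectangle of its
;;; parts' shapes. Rectangles are (X Y WIDTH HEIGHT) lists, for brevity.

(defun bounding-rectangle (rectangles)
  "Smallest axis-aligned rectangle enclosing all RECTANGLES, or NIL if none."
  (when rectangles
    (loop for (x y w h) in rectangles
          minimize x into left
          minimize y into top
          maximize (+ x w) into right
          maximize (+ y h) into bottom
          finally (return (list left top (- right left) (- bottom top))))))

;; A wheel and a handlebar, in the bicycle's coordinates:
(bounding-rectangle '((0 40 30 30) (20 0 10 5)))   ; => (0 0 30 70)
```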

- Containers

Now, let's get something out of the way.

If you want to store a bunch of cells in a list or a vector, or what have you, what do you do? 'Cause you need to do that if you are generating cells.

Well, you can set up a cell, put all those cells in the data structure of your liking, and draw them yourself. Then you would just have to keep the meta slots like :super and :parts up to date.

Instead, you could use containers, which do exactly that: they are cells defined for some common data structures, and they keep the metadata up to date.

A container gives you direct access to its data structure (simply held in a slot) and takes the bookkeeping off your hands.

—

There's a different way to approach containers and I will discuss it in the section on KR with Generalized Selectors.

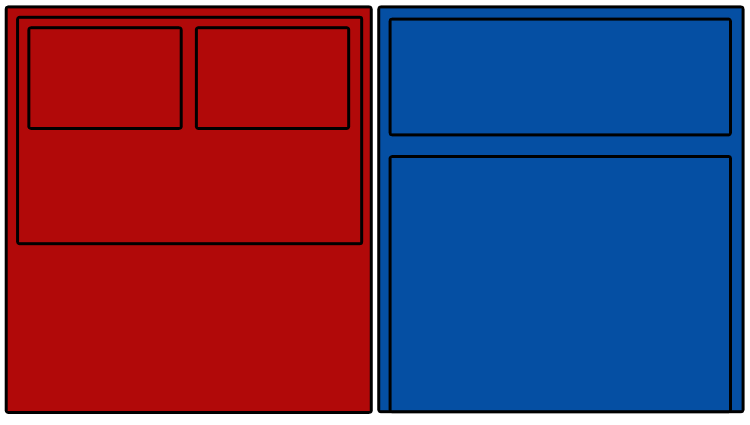

- Contexts

Suppose you have two branches of cells, and you want all the cells in one branch to have a red background, but have a blue background in the other branch.

One way to do that would be to walk each branch and set each cell's background one by one. But then, every time you add a cell to a branch, you would have to set its background as well. Not to mention that you would have to keep track of what branch has what background color.

The way Fern solves the problem is through contexts.

Each cell has its own context in its :context slot. A context is a KR schema.

What's interesting about a context is that it inherits from the context of its super (to repeat: the cell to which the current cell belongs). The super's context, in turn, inherits from the context of its own super. The context of the cell at the root of the tree dynamically inherits from the global context object.

So, the contexts form an inheritance tree that mirrors the tree of cells. Note that the values are not copied, so contexts are quite cheap.
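A minimal sketch of such an inheritance chain in plain Common Lisp: each context stores only its local overrides plus a link to its super's context, and lookups walk up the chain. The context structure, context-get and context-set are hypothetical names; in Fern this would be ordinary KR inheritance.

```lisp
;;; Sketch of context inheritance. All names are hypothetical.

(defstruct context
  parent                        ; the super's context, or the global one
  (slots (make-hash-table)))    ; local overrides only; nothing is copied

(defun context-get (context key)
  "Look KEY up locally, then in each ancestor context. NIL if absent."
  (loop for ctx = context then (context-parent ctx)
        while ctx
        do (multiple-value-bind (value found) (gethash key (context-slots ctx))
             (when found (return value)))))

(defun context-set (context key value)
  (setf (gethash key (context-slots context)) value))

(defparameter *global* (make-context))
(context-set *global* :bg :white)

(defparameter *branch* (make-context :parent *global*))
(defparameter *leaf* (make-context :parent *branch*))

(context-get *leaf* :bg)   ; => :WHITE, inherited from *global*
(context-set *branch* :bg :red)
(context-get *leaf* :bg)   ; => :RED, the whole branch changes at once

;; Moving the leaf's cell elsewhere just re-points its parent context:
(setf (context-parent *leaf*) *global*)
(context-get *leaf* :bg)   ; => :WHITE
```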

But what you can do now is say

(set-value some-cell :context :colorscheme :bg +red+)

And some-cell, along with all its parts (constituent cells) and their parts, will have a red background (since the drawing functions rely on the context to provide such information).

All of this is done dynamically, so if you move a cell into another cell, its context inheritance will change (because this is accomplished simply by constraining the :is-a relation).

A context may itself have other schemata within it (e.g. :colorscheme, :font-settings), which in turn also inherit from the namesake slots in the super's context.

- No Windows

Well, you might be itching to draw some stuff by now. For that we need to create a window.

Or do we?

Because we could simply say that if a cell has no super (thus making it a root of the tree), and if that cell is visible, display it as a window in your windowing system. Or as a workspace in a desktop environment. Or as a tab in a browser.17 Not that anyone would want such a thing.

A cell with no super may be invisible by default. This is easily modeled as a constraint, so once it becomes a part of another cell, it automatically becomes visible; this would prevent accidental creation of windows.

Of course, you can still give hints to the backend responsible for maintaining the window by assigning values to slots that it understands (like :name). And vice versa, the backend could place its own properties or constraints in a given cell (perhaps, to allow some native behavior).

So, we can define a window to be just a visible cell without a super. This makes it very easy to take a window and place it in some other cell.

As such, it seems quite satisfactory that a notion of "window/frame object", so ubiquitous in GUI frameworks, can be done away with fairly easily.

- The Drawing Process

The tree of cells contains all the information for its rendition to the screen. And we want it rendered automatically, yet leaving all control to the user should the need arise.

How should we do this?

One obvious way would be to walk the tree every screen frame and call the draw function of each cell. That would be quite wasteful: you would be doing this 60 times a second, some cells may be expensive to render, and there may be a lot of cells.

Instead, what if each cell had a texture18 Or, rather, an abstraction of a texture over some real GPU texture. that would be constrained as an output of its parts and its own shape? That's a step in an interesting direction. Now, whenever some cell changes, its texture is re-rendered and the change ripples up to the root. So, unless there are changes, no frivolous drawing has to take place.

This approach is also a bit wasteful when you have many small cells: after all, each cell would need its own texture. More than a memory issue, however, it is an issue of extraneous draw calls for rendering those textures to their super's texture.

So, the first way has no caching, and the second way is full of caching, and neither is ideal. We need a mixture of the two, a way to choose between immediate drawing and cached drawing for any given cell. Ideally, we could hit a switch on a cell which would decide what kind it is. We also want to leave the user a way to control the process.
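To make the trade-off concrete, here is a toy sketch of mixed immediate and cached drawing. The node structure and all function names are hypothetical, a texture is faked as a nested list, and *draw-calls* counts how much drawing actually happens. Invalidating a node marks it and all its ancestors dirty, mirroring the ripple-to-the-root idea.

```lisp
;;; Toy sketch of per-cell choice between immediate and cached drawing.
;;; All names are hypothetical.

(defparameter *draw-calls* 0
  "How many nodes were actually drawn.")

(defstruct node
  name
  parent
  (children '())
  (cached-p nil)   ; the switch: keep this node's rendering in a texture?
  (dirty-p t)      ; has anything in this subtree changed since the last draw?
  (texture nil))   ; the cached rendering, faked as a nested list

(defun invalidate (node)
  "Mark NODE and every ancestor up to the root as dirty."
  (loop for n = node then (node-parent n)
        while n
        do (setf (node-dirty-p n) t)))

(defun add-child (parent child)
  (setf (node-parent child) parent)
  (push child (node-children parent))
  (invalidate parent))

(defun render (node)
  "Return NODE's rendering, reusing its texture if it is cached and clean."
  (if (and (node-cached-p node) (not (node-dirty-p node)))
      (node-texture node)
      (let ((drawing (progn
                       (incf *draw-calls*)
                       (cons (node-name node)
                             (mapcar #'render (node-children node))))))
        (setf (node-dirty-p node) nil)
        (when (node-cached-p node)
          (setf (node-texture node) drawing))
        drawing)))

(defparameter *root*  (make-node :name :root :cached-p t))
(defparameter *panel* (make-node :name :panel :cached-p t))
(defparameter *label* (make-node :name :label))   ; cheap: drawn immediately

(add-child *root* *panel*)
(add-child *panel* *label*)

(render *root*)          ; first frame: all three nodes are drawn
(setf *draw-calls* 0)
(render *root*)          ; nothing changed: the root's texture is reused
*draw-calls*             ; => 0
(invalidate *label*)
(render *root*)          ; label, panel and root are redrawn
*draw-calls*             ; => 3
```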

How this is done exactly is not too important for our discussion, but with a few constraints, :context, and a couple of slots, it should be rather approachable. And the whole process will be out on the surface for the user to tweak and modify.

As for custom drawing (like drawing a non-interactive circle), it is as simple as (draw (make-circle ...)). The draw method relies on :context, so you don't have to pass extraneous information like the viewport or the default color. (There will be a macro to modify a context locally, though.)

- Sensing the Environment

One may set the slots of any given cell, and the cell may react to that by setting up its constraints on those slots. The events can be useful as well, and the cell can process them in the same manner.

For instance, whenever a user presses a key, an event will be issued, which may then be passed to the active root cell (root cell means a cell without a super). The root cell may then pass it to its branches (or consume it), and so forth. By pass, I mean that there's simply an :event slot, which will be assigned to, and which may be constrained (as an input).

If a history of events is needed, a constraint can build it with an output into a separate slot (e.g. :recent-events).

How should the input be dealt with, exactly? Well, state machines let you easily model many types of user input (and, probably, some other kinds of activities as well). Since kr can represent graphs, it is suitable for building state machines on the go. So, in your constraint, you can create a state-machine schema and use its slots as outputs, and the events as inputs.

In other words, there's no need for any special input-handling abstraction: kr+constraints should be enough to make a cell interact with its environment.
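As a sketch of the state-machine approach, the following folds a stream of events through a transition table. The table, event names and states are hypothetical, and a plain list stands in for the values that would arrive through a cell's :event slot.

```lisp
;;; Sketch: input handling as a state machine folded over incoming events.
;;; The transition table and all names are hypothetical.

(defparameter *drag-transitions*
  '(((:idle     :mouse-down) . :pressed)
    ((:pressed  :mouse-move) . :dragging)
    ((:pressed  :mouse-up)   . :clicked)
    ((:dragging :mouse-move) . :dragging)
    ((:dragging :mouse-up)   . :dropped)
    ((:clicked  :mouse-down) . :pressed)
    ((:dropped  :mouse-down) . :pressed))
  "Alist mapping (STATE EVENT) to the next state.")

(defun next-state (state event &optional (table *drag-transitions*))
  "Follow the transition for EVENT, or stay in STATE if there is none."
  (let ((entry (assoc (list state event) table :test #'equal)))
    (if entry (cdr entry) state)))

(defun run-events (events &optional (state :idle))
  (reduce #'next-state events :initial-value state))

(run-events '(:mouse-down :mouse-up))                          ; => :CLICKED
(run-events '(:mouse-down :mouse-move :mouse-move :mouse-up))  ; => :DROPPED
```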

Configurations not implemented yet

Prerequisite section: There's No Such Thing As Sane Defaults.

Say we need some sort of configuration objects in our system. A configuration doesn't have to do much: it has to be able to add, replace or modify some slots of another schema to which it is applied. Ideally, a configuration should be reversible, and multiple configurations could be layered.

If we want reversibility (a sort of undo mechanism for customization), we can't simply replace the local slots of a configured schema. How about inheritance? It's reversible. However, inheritance doesn't override local values, which is what we need a configuration for in the first place!

Instead, we want the configured schema to behave as if it inherited from itself after being configured: (:is-a (list configuration configured-schema)).

Of course, you could easily create another schema with that inheritance relation, but we want the original schema modified, not a new one.

So, that's what we will define configurations to be: an inheritance relation that makes the configured schema behave as if it inherited from the configurations and, lastly, its old self. Something like this:

    (:is-a (list configuration-1
                 configuration-2
                 ;; ...
                 configured-schema))

So, the configuration slots take precedence over the configured slots. And if you don't want some slot to take precedence, you may simply remove that slot from the configuration.

The way configurations will be done within kr is an implementation detail and shouldn't really incur an efficiency penalty any more than adding any other inheritance relation would. But we get what we set out for: reversibility and layering, all achieved in a rather simple, almost familiar manner.
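A minimal sketch of that lookup order, assuming schemata are hash tables and that a schema lists its configurations in a :configured-by slot: configurations are consulted first, in order, then the schema's own slots. schema and lookup are hypothetical helpers, not KR's API.

```lisp
;;; Sketch of configuration lookup order. All names are hypothetical.

(defun schema (&rest slots)
  "Build a hash table from alternating slot names and values."
  (let ((table (make-hash-table)))
    (loop for (name value) on slots by #'cddr
          do (setf (gethash name table) value))
    table))

(defun lookup (schema slot)
  "Consult each configuration in :CONFIGURED-BY order, then SCHEMA's own slots."
  (dolist (source (append (gethash :configured-by schema) (list schema)))
    (multiple-value-bind (value found) (gethash slot source)
      (when found
        (return value)))))

(defparameter *bicycle* (schema :color :blue :gears 3))
(defparameter *racing* (schema :color :red :gears 21))
(defparameter *night* (schema :color :black))

;; Layer two configurations; the first one listed has the highest priority.
(setf (gethash :configured-by *bicycle*) (list *night* *racing*))
(lookup *bicycle* :color)   ; => :BLACK
(lookup *bicycle* :gears)   ; => 21, since *night* has no :gears slot

;; Reverting is just removing the configurations: local slots were never touched.
(setf (gethash :configured-by *bicycle*) '())
(lookup *bicycle* :color)   ; => :BLUE
```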

There's one more point to address: versioning. To that end, the configuration author implements a configuration function that returns a specific configuration schema based on what we ask of it (like a specific version).

And, finally, the act of configuration is accomplished via a :configured-by slot and looks something like this:

    (create-schema bicycle
      (:is-a (list vehicle))
      (:configured-by (list (get-configuration bicycle-brakes :version "1.17.0"))))

So, to summarize, configurations

- are simply KR objects used to customize other KR objects,

- are parameterized and versioned,

- may be applied partially and dynamically,

- may be layered,

- are reversible,

- are modeled just like an inheritance relationship for a new object, which in itself is rather simple to understand and makes them predictable.

Configurations for cells may even be set up contextually: just set up a constraint that appends a configuration to a schema based on its cell type.

So, the necessity of configurations has a lot to do with the importance of circumventing the need for defaults: nearly all Fern objects are schemata, including global settings, contexts, and cells. But configurations also help with distributing, testing and storing your own, well, configurations of the system.

Sometimes, bulk configuration may be required (to be applied to several schemas at once). Or, perhaps, a configuration may need to define or redefine an external function upon application, or do some action beyond the schema. The configuration system will be designed for that and won't be limited strictly to kr.

Linear Algebra

APL stands for A Programming Language. It is array-oriented and uses a very cryptic, but concise, syntax to manipulate arrays in a very efficient manner. You can take a look at the available functions in this document: Dyalog APL Function and Operators, and here's a cool APL demo video. There's also the APL Wiki.

Basically, APL provides a neat way to work with arrays, and that means linear algebra, which is an important foundational stone for graphical applications: vectors/points, transformation matrices, etc.

Fern uses April, a project that brings APL to Common Lisp. April is a compiler for APL code, and the dialect it implements is pretty close to Dyalog APL.

(april-f "2 3⍴⍳12")

    0 1 2
    3 4 5

or, in CL terms:

#2A((0 1 2) (3 4 5))

So, arrays in April are just usual CL arrays, holding either floats or integers (or 0/1 booleans).

I prefer readability, so Fern provides named translations for all the APL functions that April supports. Now you can write the expression above like this:

(reshape #(2 3) (i 6))

i generates numbers from 0 to 5, and reshape reshapes them into a 2-by-3 array. So, that's pretty neat!19 It's important to note that using the April compiler directly with multiple operations in a row may generate better-optimized code, though.
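For readers unfamiliar with APL, this plain Common Lisp sketch mimics what the two named functions do, without April. iota-vector and reshape-vector are hypothetical names chosen so as not to clash with Fern's i and reshape. As with APL's reshape, the source is cycled when it is shorter than the result.

```lisp
;;; Plain-CL sketch of APL-style iota and reshape, without April.
;;; IOTA-VECTOR and RESHAPE-VECTOR are hypothetical names.

(defun iota-vector (n)
  "Vector of the integers 0 .. N-1 (APL iota with index origin 0)."
  (let ((v (make-array n)))
    (dotimes (k n v)
      (setf (aref v k) k))))

(defun reshape-vector (dimensions source)
  "Array of DIMENSIONS filled row-major from SOURCE, cycling it as APL does.
SOURCE is assumed to be a non-empty vector."
  (let* ((result (make-array (coerce dimensions 'list)))
         ;; A flat view over RESULT lets us fill it in row-major order.
         (flat (make-array (array-total-size result) :displaced-to result))
         (source-length (length source)))
    (dotimes (k (array-total-size result) result)
      (setf (aref flat k) (aref source (mod k source-length))))))

(reshape-vector #(2 3) (iota-vector 6))   ; => #2A((0 1 2) (3 4 5))
(reshape-vector #(2 2) (iota-vector 3))   ; => #2A((0 1) (2 0))
```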

KR with Generalized Selectors not implemented yet

One interesting direction is to think of KR objects not at the level of hash tables, but at the level of their slot-access semantics, leaving the storage details to the object itself.

So, for example, you could say that you want a vector to represent your schema.

Then you could define the meaning of accessing :0 in that schema (e.g. returning the first element of the vector), or any number :x.

So, these would be the selectors, and it would be necessary to define their semantics of access and setting.

This way, the containers as described previously are replaced with a much more powerful alternative; all the user has to do is describe any container/data-structure/object in terms of these selectors, and the container would be held in place of the hash table.

And then, the usual definition of a slot would simply define a namesake selector.

Also, this paves a natural way for defining the semantics of generalized pointer variables. So, a selector for * could be defined as all the elements of the data structure.

So, a generalization like that can be really powerful and give a high level of homogeneity. And note that these would still work just as well with constraints.

This is not implemented yet, but it's probably one of the first things that will have to be addressed.
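A rough sketch of the selector idea using a CLOS generic function, where the meaning of a selector depends on the storage behind the object. select is a hypothetical name; a keyword whose name is all digits (such as :0) selects by index, and * selects every element. Setting would need a second generic function along the same lines.

```lisp
;;; Sketch of generalized selectors. SELECT and INDEX-SELECTOR-P are
;;; hypothetical names.

(defgeneric select (container selector)
  (:documentation "Return what SELECTOR means for CONTAINER."))

(defun index-selector-p (selector)
  "True for keywords such as :0 or :12 whose name is all digits."
  (and (keywordp selector)
       (plusp (length (symbol-name selector)))
       (every #'digit-char-p (symbol-name selector))))

(defmethod select ((container hash-table) selector)
  (if (eq selector '*)
      (loop for value being the hash-values of container collect value)
      (values (gethash selector container))))

(defmethod select ((container vector) selector)
  (cond ((eq selector '*) (coerce container 'list))
        ((index-selector-p selector)
         (aref container (parse-integer (symbol-name selector))))
        (t (error "No selector ~S is defined for vectors." selector))))

;; The same call works whatever storage sits behind the object:
(select (vector :a :b :c) :1)   ; => :B
(select (vector 1 2 3) '*)      ; => (1 2 3)
(let ((table (make-hash-table)))
  (setf (gethash :mass table) 4.37)
  (select table :mass))         ; => 4.37
```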

Geometry

Geometrical shapes are business as usual. They are implemented as structs20 Manipulation is meant to be functional, and all slots are read-only., but each shape will have a corresponding schema for easy manipulation.

Functions like intersect-p and draw are generic21 For comfort and speed, I will likely be using polymorphic-functions for this. and will work with either representation.
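A minimal sketch of the struct-plus-generic-function approach. The rect structure, move-rect and this intersect-p are illustrative only, and plain CLOS dispatch stands in for polymorphic-functions.

```lisp
;;; Sketch: an immutable shape struct plus a generic predicate.
;;; RECT, MOVE-RECT and INTERSECT-P are hypothetical, not Fern's API.

(defstruct (rect (:constructor make-rect (x y width height)))
  (x 0 :read-only t)
  (y 0 :read-only t)
  (width 0 :read-only t)
  (height 0 :read-only t))

(defgeneric intersect-p (a b)
  (:documentation "True if shapes A and B overlap."))

(defmethod intersect-p ((a rect) (b rect))
  ;; Overlap on both axes; rectangles that merely touch do not intersect.
  (and (< (rect-x a) (+ (rect-x b) (rect-width b)))
       (< (rect-x b) (+ (rect-x a) (rect-width a)))
       (< (rect-y a) (+ (rect-y b) (rect-height b)))
       (< (rect-y b) (+ (rect-y a) (rect-height a)))))

(defun move-rect (r dx dy)
  "Functional update: return a new rectangle instead of mutating R."
  (make-rect (+ (rect-x r) dx) (+ (rect-y r) dy)
             (rect-width r) (rect-height r)))

(intersect-p (make-rect 0 0 10 10) (make-rect 5 5 10 10))   ; => T
(intersect-p (make-rect 0 0 10 10) (make-rect 10 0 5 5))    ; => NIL
```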

2D API not implemented yet

Fern will define a specification and set a few requirements for the graphical backends to implement.

The user is expected to install the backend separately from the core.

The specification will include some pretty basic drawing functions, and functions that work on multitudes of shapes. It will also define a few kinds of events, including those for the keyboard and the mouse.

For color, Fern will likely absorb one of the existing Common Lisp color libraries.

The specification will be guided by SDL (a platform-independent graphics library), and, in fact, SDL will be the first backend to be implemented.

—

Native look is beyond the scope of the campaign. However, the backend may itself provide configurations for any existing cells for the user to apply to make them look and behave appropriately.

3D API not implemented or planned

It's not out of the question that at some point in the future, it might be very handy to acquire a language for building 3D scenes. Obviously, it would have to integrate with the existing 2D primitives.

3D is not a part of the campaign.

Text Rendering not implemented yet

The API for rendering text will mimic a basic subset of Pango.

Clicking It All Together

If you made it this far, congratulations! Fern is the most technical section of the bunch. Perhaps that's because much of it is implemented already (KR, constraints, basic geometry, and linear algebra).

Sans a few implementation details, at this point, you probably know about Fern about as much as I do.

So, what do we have?

We have a fernic tree full of cells, and it is an organism that renders itself to the screen, each cell responsible for its own processing and interaction with the world around it (though each may be influenced from the outside).

And if you wanted some geometrical shape to be interactive, you just make a cell of it and place it somewhere.

If you have to render a million interactive shapes, you set up a container, also a cell, and teach that cell to interact with that container, and set up all the necessary structure to deal with large amounts of drawn material efficiently.

An interesting outcome of all this cell-tree business is that the displayed graphics corresponds to the cells that render them, so pixels attain semantics beyond the visual aspect.

This yields not just self-documenting, but self-demonstrating, semantically-explorable user interfaces.22 Or, if you lose the marketing speak, this just means that pixels map back to objects, which map back to code, which may, in turn, be associated with some documentation.

Fern isn't so much about building GUIs as it is about providing a good way to maintain a living, dynamic tree-like structure, every branch of which you can freely fiddle with. Constraints let you do that declaratively, and contexts let you localize your changes, and configurations provide for a rather painless cross-user customization experience.

With prototype OO, the classes are objects, and the objects are classes, so, in case of kr, the boundary between the data structure and its own definition disappears.

KR makes it easy to remove or add any slots, constraints and behaviors. Inheritance relations allow for dynamic value propagation. Contexts let us tweak whole branches at once, and configurations get us rid of the problematic notion of defaults. Unlike conventional OO, kr grants a great deal of runtime flexibility.

All facets of Fern largely boil down to kr, constraints and the few bare-bones concepts that we define with their help, namely configurations and contexts. Beyond that, there are just the event objects, the texture objects, the shapes, and the API for drawing shapes and text to said texture objects. Some linear algebra. That's it.

—

Fern is a spiritual successor to Garnet, a Common Lisp project for building graphical user interfaces (GUIs). Garnet originated at CMU in 1987 and became unmaintained in 1994. It's a great toolkit which was used for many research projects, some of which are still active. I talk more about Garnet in another article.

Both KR and Multi-Garnet8 Multi-Garnet is a project that integrates the SkyBlue constraint solver with Garnet. For a technical report, see: Multi-Garnet: Integrating Multi-Way Constraints with Garnet by Michael Sannella and Alan Borning. may be found in the Garnet repository1 See the home page here. The latest Garnet repository may be found here. Or just see the Wiki.

Rune: A Platform for Constructing Seamless Structural Editors

In just a little while I will explain what the words in the title mean exactly. But that's in just a little while. Because for far too long has computer interaction been plagued by the tyrannical idea of plain text…

… but what is plain text, really?

One way to look at plain text is how you store data: as human-readable text.

Lots of things are plain text on your computer. Source code is plain text. Notes are plain text. Dotfiles, command history, even some rudimentary databases may all also be stored as plain text.

Plain text, in that understanding, is something that feels safe and accessible. Whatever complex configuration a program requires, you know all you need is a text editor to modify it. The whole idea has to do with storage and a guarantee of ubiquitous, easy access.

The other way is to say that plain text is how you work with data: as a string of characters.

Unlike the previous (benign) interpretation, this one has been a source of pure evil in lots and lots of our experiences of interacting with computers. And within the realms of this evil interpretation, plain text has been misused and abused to no end.

The common symptom of such abuse is how the receiver of data has to be doing some parsing based on some specific documentation instead of, say, a universal representation23 Such as s-exps or, Stallman save us all, XML.. This misuse is ubiquitous and apparent in how Unix-like programs communicate with the user and with each other: through some ad-hoc textual output, which you have to parse somehow.24 As has been duly noted, Unix pipes are like hoses for bytes.

Now, I have to make the distinction clear: the process of serializing data as text and passing it as a string to another program is not evil in and of itself. It is simply a necessity for badly-integrated dead operating systems (such as all the mainstream ones) where programs have to interact through such barbaric means, but it all has little to do with the plain text inanity that we are talking about here.

What I want to focus on is the fact that in user-level applications there's no automatic conversion to a structured representation at all once you receive that output. When you type ls in the terminal and get a dump of lines describing the directory structure to you, that dump of lines carries information that you can only extract by means of parsing. And that's what really is evil.

Now, the example with our 1970s terminal emulators is well-known, and everybody knows that the situation is bad. But however bad the situation is, everyone has just as readily accepted it as an inseparable part of their daily lives, something that can't really be argued against except in dreams about a far-off bright future.

It's bad, yes, but it's really OK, you see. You can deal with plain text output from some programs just by doing some parsing on it. You can deal with it just fine as you can deal with sed/awk/grep and all that regexp goodness with mad syntax. Mad syntax and bad decisions and manual fiddling with things that the computer should've really been doing for you in the first place.

But do you know where the real treasure trove of insanity is buried?

That's right. You guessed it.

Within our precious text editors /( ͡° ͜ʖ ͡°) /.

The Status Quo of Text Editing

So, get your treasure maps and talking parrots and wooden legs ready, we are going treasure hunting.

Your text editor probably has some kind of way to state that a certain file you are working on has some kind of format. In Emacs, that's called a "major mode". For instance: elisp, dired, help, org, whatever.

And what's a major mode, really?

I will tell you what it is. It is but a declaration that the text string25 Emacs people like to call their strings buffers, but that's but a trick of the tongue: a buffer is really no different than a string with some confetti flying around. you are going to work with has a certain kind of structure.

And so you need certain kinds of tools and further declarations to deal with that structure. What could those possibly be? Well, it could be something like: a function to select the current function definition. Or: delete the current tree branch in an org-mode string. It's always something to do with the semantics of the immediate characters surrounding the point, all quite primitive stuff really.

And then there's also a need to have a syntax table for the syntax highlighting, which is basically a bunch of rules describing how to indent certain semantically-significant parts of the string and what pieces of that string you want colored.

Strings, strings, etc, etc…

So, did you see what just happened? Your text editor has just taken some data, decided to call it a string, thus stripping it of its original structure, and then, since the original structure indeed exists and is valuable, it decided to offer some subpar tooling geared specifically for different types of strings. And called it a day!

Somewhere along that way, someone was badly, badly bullshitted. And that someone was you, me, and everybody else who touched any modern text editor.

95% of all user-level operations in a contemporary text editor are accomplished through some kind of regexp trickery. The rest is done by walking up and down the string. Walking and parsing. Parsing and walking. Back and forth, back and forth.

If you know what turtle graphics is, you know how modern editors work with text.

How Much Structure is Out There Anyway?

A lot. Have a tiny taste:

Notes. These tend to have a tree structure. What does your plain text editor do when you want to remove the section at point? It scans backwards from the point, inspecting each character, until it finds a demarcation that says: I am a headline. A couple of stars at the beginning of a line. An easy regexp. Not too expensive, so it doesn't really matter, does it? Not until you try to build a navigator for the structure of your tree, anyway.
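To make the turtle-walking concrete, here is a minimal Python sketch of how a string-based editor might find the headline that owns the point. It assumes Org-style headlines (a line starting with `*`), and the function names are made up for illustration. Real editors usually do this with a backward regexp search rather than an explicit loop, but the shape of the work is the same: step back until something looks like a headline.

```python
from typing import Optional


def line_start(text: str, pos: int) -> int:
    """Walk left from `pos` until just after a newline (or the buffer start)."""
    while pos > 0 and text[pos - 1] != "\n":
        pos -= 1
    return pos


def headline_at_point(text: str, point: int) -> Optional[int]:
    """Return the offset of the headline that owns `point`, or None."""
    pos = line_start(text, point)
    while True:
        if text.startswith("*", pos):  # a star at the beginning of a line
            return pos
        if pos == 0:
            return None  # walked all the way to the top: no enclosing section
        # Step over the previous newline and walk to the start of that line.
        pos = line_start(text, pos - 1)
```

In a document that is actually stored as a tree, the cursor would already sit inside a section node, and "the section at point" would be a field lookup rather than a walk.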

Well, now, go ahead and make a table in your notes. A bit harder to reason about, but well. Autoformatting as you type is kind of helpful. But a multiline string within a cell? Hmm. No, no, forget about that!

But it's just a plain-text table.

So, what went wrong? Why can't you have multiple lines in a cell?

Answer: because it's too hard.

Try summing all the numbers along a column now. You can do it. Was it worth it? How much scanning did you just do?
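Here is roughly what that column sum costs in each world, as a Python sketch. The table text is an invented Org-style example, and the parser is deliberately naive (integers only, one line per row). A multiline cell would already break it, which is rather the point.

```python
# An invented Org-style table, stored the plain-text way.
TABLE_TEXT = """\
| item  | qty |
|-------+-----|
| nails |  12 |
| bolts |   7 |
"""


def column_sum_from_text(text: str, col: int) -> int:
    """String world: re-split every row of the table just to reach one column."""
    total = 0
    for line in text.splitlines():
        if not line.startswith("|") or line.startswith("|-"):
            continue  # skip non-table lines and separator rules
        cells = [c.strip() for c in line.strip("|").split("|")]
        if cells[col].isdigit():  # header cells are not numbers
            total += int(cells[col])
    return total


# The same data held as a 2D array: no parsing at all.
TABLE = [["item", "qty"], ["nails", 12], ["bolts", 7]]


def column_sum(rows: list, col: int) -> int:
    return sum(row[col] for row in rows[1:])  # skip the header row
```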

Now make a word in some cell of your table bold. Since it's just plain text, you have just surrounded your word with a pair of markers like this: *word*.

See, even words have structure. Are words objects? We wouldn't have a word for them if they weren't significant somehow. And that's how I would want to analyze and work with them: as words. For the plain text editor, a word is just a cluster of characters somewhere in a string, and there's a whole pile of mess built on top of that string to convince you otherwise.

- Your word was supposed to be an object that carries information about itself.

- Your table was supposed to be a 2d array.

- Your notes were supposed to be a tree.

- Your table was supposed to be a node in that tree.

- The word was supposed to be a part of a sentence within a cell within your table.

None of that structure exists when you edit as plain text.

Some may say: So? Strings have been working just fine for the most part!

Well, "for the most part" is not good enough.

We get a sense of power from editors that work with files as strings because, in theory, you could make them do anything (or almost anything, as we will see later). That's a false sense. And the system won't tell you that you need to stop. It will simply say: try harder, do more optimization, do more caching, include more edge cases, fix more bugs, come up with better formatting, turtle around better, etc… All while it gets slower and slower and more bloated and more monstrous. Until it all becomes impractical and then you give up and say it's good enough.

But it isn't enough.

Doing something the wrong way can only take you so far, and we have already seen the limits of it in our text editors. We have hit the ceiling, and there's no "up" if you wish to pretend your data is doing fine as a string.

"Strings are not the best representation of structural data" may seem like a truism. And it absolutely is. But the outcomes of the opposite understanding are ingrained in our reality nonetheless.

So, let's just see what we have ended up with.

The Fatal Flaws of the Data Structures for Generic Strings

The modern text editor will store your file in some kind of string-oriented data structure: a gap buffer, a rope tree, a piece table. Editors don't even expose those structures to you (not that it would help much).
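For reference, here is a minimal gap buffer in Python. It is a textbook sketch, not any particular editor's implementation. Notice what it knows about: characters and the position of a gap. There is no headline, word or table cell anywhere in it, so everything else has to be rediscovered by scanning.

```python
class GapBuffer:
    """Characters before and after a movable gap; edits happen at the gap."""

    def __init__(self, text: str = "", capacity: int = 16):
        size = max(capacity, len(text) * 2)
        self.buf = list(text) + [None] * (size - len(text))
        self.gap_start = len(text)  # first free slot
        self.gap_end = size         # first character after the gap

    def _move_gap(self, pos: int) -> None:
        # Shift characters across the gap until the gap starts at `pos`.
        while self.gap_start > pos:
            self.gap_start -= 1
            self.gap_end -= 1
            self.buf[self.gap_end] = self.buf[self.gap_start]
        while self.gap_start < pos:
            self.buf[self.gap_start] = self.buf[self.gap_end]
            self.gap_start += 1
            self.gap_end += 1

    def _grow(self) -> None:
        # Splice fresh empty slots in at the gap when it has closed up.
        extra = max(16, len(self.buf))
        self.buf[self.gap_end:self.gap_end] = [None] * extra
        self.gap_end += extra

    def insert(self, pos: int, ch: str) -> None:
        self._move_gap(pos)
        if self.gap_start == self.gap_end:
            self._grow()
        self.buf[self.gap_start] = ch
        self.gap_start += 1

    def delete(self, pos: int) -> None:
        """Delete the character at `pos` by widening the gap over it."""
        self._move_gap(pos)
        self.gap_end += 1

    def text(self) -> str:
        return "".join(self.buf[:self.gap_start] + self.buf[self.gap_end:])
```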

Here are the outcomes of the "everything is just a string" approach to text editing:

- No querying.

- No trackable metadata.

- No semantic editing.

- No efficiency.

The only way for you to reliably query some structure in the textual information that your file represents is to scan it all the way through. For instance, you may ask of your document with notes: find all sections that have a certain tag associated with them. What does the editor do? It scans the whole damn thing, every time you ask for it.
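Here is a small Python sketch of the difference. The first function rescans the whole text on every query, assuming Org-style `:tag1:tag2:` suffixes on headlines. The second keeps sections as objects and updates a tag index as sections are added. `Section` and `Notes` are hypothetical names for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass, field

NOTES = """\
* Groceries :home:
milk, eggs
* Taxes :home:money:
file by April
* Garnet notes :lisp:
KR prototypes
"""


def sections_with_tag_by_scan(text: str, tag: str) -> list:
    """String world: walk every line of the document on every single query."""
    hits = []
    for line in text.splitlines():
        if line.startswith("*") and line.endswith(":"):
            title, _, tags = line.lstrip("* ").rpartition(" :")
            if tag in tags.strip(":").split(":"):
                hits.append(title)
    return hits


@dataclass
class Section:
    title: str
    tags: set = field(default_factory=set)


class Notes:
    """Structural world: sections are objects, and a tag index rides along."""

    def __init__(self):
        self.sections = []
        self.by_tag = defaultdict(list)

    def add(self, section: Section) -> None:
        self.sections.append(section)
        for tag in section.tags:
            self.by_tag[tag].append(section)

    def with_tag(self, tag: str) -> list:
        # A dictionary lookup: no scanning, however large the document grows.
        return [s.title for s in self.by_tag.get(tag, [])]
```

The index stays trustworthy only because every change goes through the structure's own operations (`add` here; a fuller version would also have remove and retag).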

You could try to map every deletion and insertion back into a separate data structure. But then your editing operations still haven't learned anything about the actual semantics of the document.

Not to mention that the whole concept of constantly reconstructing a structure from its inferior representation is backasswards!

Moreover, incremental caching is hard to get right, especially if you are dealing with structures more complex than a sequence of words. And don't miss the fact that it is slow, and it will get slower as the file gets larger. A misplaced character may disrupt the whole structure of the document. This is not trivial to deal with. And it is error-prone.

Incremental maintenance of a separate structure based on string edits is hard. That means tracking object identity is just as hard.

And object identity is paramount if you want to associate some external knowledge with a place in your document: for instance, to index your content.

By extension, working in terms of semantic units isn't trivial either. Neither is hiding content.

None of it is impossible, though: just hard enough for you not to bother.
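Here is a small Python sketch of why identity matters. It uses a hypothetical `Word` class with an auto-assigned ID. The first function anchors an annotation by character offset, the way a string-based editor does by default. The second anchors it to the word object itself. Editors can patch the first case with markers that shift on insertion (Emacs has them), but that is exactly the hand-rolled identity tracking described above.

```python
import itertools
from dataclasses import dataclass, field

_next_id = itertools.count(1)  # hypothetical document-unique ID scheme


@dataclass(eq=False)  # words are compared by identity, not by spelling
class Word:
    text: str
    id: int = field(default_factory=lambda: next(_next_id))


def offset_anchor_demo() -> str:
    """String world: an annotation remembers a character offset."""
    text = "alpha beta gamma"
    anchor = text.index("gamma")     # the annotation's "place"
    text = "new " + text             # an edit earlier in the document...
    return text[anchor:anchor + 5]   # ...and the anchor now points elsewhere


def object_anchor_demo() -> tuple:
    """Structural world: an annotation remembers the word object itself."""
    words = [Word("alpha"), Word("beta"), Word("gamma")]
    index = {w.id: w for w in words}  # external knowledge keyed by identity
    target = words[2].id
    words.insert(0, Word("new"))      # the same edit
    found = index[target]
    return found.text, words.index(found)  # same word, new position
```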

Trying to fit a string to another structure or impose a structure on it internally (by carrying around and hiding the markers) is basically Greenspun's tenth rule applied to text editing:

Any sufficiently complicated string-based editor contains an ad hoc, informally-specified, bug-ridden, slow implementation of half of a structural editor.

One way to look at it is that, within the string paradigm, you can never treat anything as an object. Objects let you carry information and free you from having to encode the object within its presentation. Objects let you have composition, complex behaviors and complex ways of interaction. None of this is very reasonable within the string paradigm. It would require identity tracking, and if you are doing that with strings, you are really just beginning to reimplement structural editing, but without the slightest chance of getting it right.

Unstructured data leaves you with just one option: turtle-walking.

Is turtle-walking fast?

I am glad you asked! Because I can't even begin to explain how painful the micro-freezes are to me when I am working with text. I can't perceive lag very well. I don't think anyone does. The lag seems to feed back into my brain, which starts predicting the lag…

Brain on lag.

My fingers start typing slower, I have an uneasy feeling, nausea of sorts, when there's lag. Text editing isn't supposed to have lag. And it's in the simplest of stuff, and all over the place.

I am basically used to raggedy scrolling by now. As much as anyone can get used to it. I think I have accepted it. I tried to make it faster; it's all still there.

Typing? Typing isn't nearly as snappy as it could and should be.

Multiple cursors? Absolutely not.

It's widespread wisdom in software engineering that programs run slower than they could because of bad abstractions, bad algorithms and bad data structures. Everybody knows that. And yet, here we are, tolerating bullshit gap buffers, piece tables and rope trees for text storage, along with a bunch of clever algorithms and hacks that optimize this mess.

Here, I found an uncut gem for you, dear reader. I mean, just look at this:

[VS Code] Bracket pair colorization 10,000x faster

Unfortunately, the non-incremental nature of the Decoration API and missing access to VS Code's token information causes the Bracket Pair Colorizer extension to be slow on large files: when inserting a single bracket at the beginning of the checker.ts file of the TypeScript project, which has more than 42k lines of code, it takes about 10 seconds until the colors of all bracket pairs update. During these 10 seconds of processing, the extension host process burns at 100% CPU and all features that are powered by extensions, such as auto-completion or diagnostics, stop functioning. Luckily[26], VS Code's architecture ensures that the UI remains responsive and documents can still be saved to disk.

[26] Emphasis mine.

CoenraadS was aware of this performance issue and spent a great amount of effort on increasing speed and accuracy in version 2 of the extension, by reusing the token and bracket parsing engine from VS Code. However, VS Code's API and extension architecture was not designed to allow for high performance bracket pair colorization when hundreds of thousands of bracket pairs are involved. Thus, even in Bracket Pair Colorizer 2, it takes some time until the colors reflect the new nesting levels after inserting { at the beginning of the file:

While we would have loved to just improve the performance of the extension (which certainly would have required introducing more advanced APIs, optimized for high-performance scenarios), the asynchronous communication between the renderer and the extension-host severely limits how fast bracket pair colorization can be when implemented as an extension. This limit cannot be overcome. In particular, bracket pair colors should not be requested asynchronously as soon as they appear in the viewport, as this would have caused visible flickering when scrolling through large files. A discussion of this can be found in issue #128465.[27]

[27] That's: Issue Number One Hundred Twenty-Eight Thousand Four Hundred Sixty-Five. Jesus.

[…]

Without being limited by public API design, we could use (2,3)-trees, recursion-free tree-traversal, bit-arithmetic, incremental parsing, and other techniques to reduce the extension's worst-case update time-complexity (that is the time required to process user-input when a document already has been opened) from O(N+E) to O(log³(N)+E) with N being the document size and E the edit size, assuming the nesting level of bracket pairs is bounded by O(log N)[28].

[28] Yeah, O(log(N)), biatch!

Look: if you had the right data structure to store your code within, then, maybe, you wouldn't need very clever optimizations and caching tactics in the first place.
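To illustrate the claim (this is a toy, not VS Code's actual design), here is a Python sketch in which code lives in a tree of bracketed forms. A bracket pair's color is just its nesting depth, read off by walking parent pointers. That costs time proportional to the nesting depth rather than the file size, and it only has to be paid for the brackets actually on screen. Wrapping a form in a new pair is a structural edit, so the document never passes through an unbalanced state like a lone `{` at the top of a file. `Node`, `wrap` and `PALETTE` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Optional

PALETTE = ["gold", "orchid", "skyblue"]  # colors cycle with nesting depth


@dataclass(eq=False)  # compare nodes by identity, not by contents
class Node:
    """A bracketed form; children are Nodes or atom strings."""
    children: list = field(default_factory=list)
    parent: Optional["Node"] = None

    def add(self, child):
        if isinstance(child, Node):
            child.parent = self
        self.children.append(child)
        return child


def depth(node: Node) -> int:
    # Cost is the nesting depth of this node, not the size of the file.
    d = 0
    while node.parent is not None:
        node, d = node.parent, d + 1
    return d


def bracket_color(node: Node) -> str:
    return PALETTE[depth(node) % len(PALETTE)]


def wrap(node: Node) -> Node:
    """Enclose `node` in a new bracket pair: a local change to the tree."""
    outer = Node(parent=node.parent)
    if node.parent is not None:
        siblings = node.parent.children
        siblings[siblings.index(node)] = outer
    outer.children.append(node)
    node.parent = outer
    return outer
```

No colors are stored anywhere, so there is nothing to invalidate after `wrap`. The next time a bracket is drawn, its depth is simply read again.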

Strings just aren't making it.

General-purpose data structures are not good enough for specialized data.

It almost sounds like a tautology, but it's important that this point be absolutely clear.

But can we work with text differently? Does specialized tooling make sense? You bet!

What We Want Instead

So you are telling me you took some highly structured data that was stored in plain text, foolishly decided to edit it as plain text, and now you have a ton of half-assed tooling that works on strings and is obviously insufficient? What do we do now?

Well, all of that happened because you were too lazy to parse the string into a fitting data structure and implement some common interface to that data structure.
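Here is a minimal sketch of what "parse the string into a fitting data structure" can mean for Org-style notes: read the text once, at load time, into a tree of sections, and operate on the tree from then on. This Python version assumes headlines are lines of `*`s followed by a space. Everything else is kept as plain body lines for now, and `Section` and `parse_outline` are illustrative names.

```python
from dataclasses import dataclass, field


@dataclass
class Section:
    heading: str
    level: int
    body: list = field(default_factory=list)      # plain lines, for now
    children: list = field(default_factory=list)  # nested Sections


def parse_outline(text: str) -> Section:
    """Parse '*'-headlines into a tree of sections, once, at load time."""
    root = Section(heading="", level=0)
    stack = [root]  # path from the root down to the section being filled
    for line in text.splitlines():
        stars = len(line) - len(line.lstrip("*"))
        if stars and line[stars:stars + 1] == " ":
            node = Section(heading=line[stars + 1:], level=stars)
            while stack[-1].level >= stars:  # climb back up to the new parent
                stack.pop()
            stack[-1].children.append(node)
            stack.append(node)
        else:
            stack[-1].body.append(line)
    return root
```

After that, removing a section is `parent.children.remove(section)`. No backward scan is needed.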

Does that sound too simple? Well, it is that simple!

Let me put that another way: let the user use his own data structures.

As we have already noted:

- You store a table in a 2d array. Not a string.

But also:

- A note tree in a tree.

- A huge file in a lazy list.

- Live log data in a vector.

- Search results as a vector and recent search results as a map.

- Lisp code in a tree.

And then you represent:

- A note section as an object with a heading and tags and what have you.

- A word as an object with style data and a unique ID within a document.

- A literate program source block as an object storing its source location and resulting output (or history thereof).

- etc, etc.
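Here is a sketch of the word and source-block representations above, in Python with hypothetical class names. Style lives on the word as data, and the `*word*` markup only appears when the word is written back out as Org-style text. The source block keeps a history of its outputs. Its `location` field is assumed here to be a file path.

```python
import itertools
from dataclasses import dataclass, field

_next_id = itertools.count(1)  # hypothetical document-unique ID scheme


@dataclass(eq=False)
class Word:
    text: str
    bold: bool = False
    italic: bool = False
    id: int = field(default_factory=lambda: next(_next_id))

    def to_org(self) -> str:
        """Markup is produced on the way out; it is not how the word is stored."""
        s = self.text
        if self.italic:
            s = f"/{s}/"
        if self.bold:
            s = f"*{s}*"
        return s


@dataclass
class SourceBlock:
    language: str
    code: str
    location: str  # where the block's source lives (assumed: a file path)
    outputs: list = field(default_factory=list)  # result history, newest last

    def record(self, result: str) -> None:
        self.outputs.append(result)

    @property
    def latest(self):
        return self.outputs[-1] if self.outputs else None
```

Making a word bold is then a field flip (`w.bold = True`), not a search for the word's boundaries followed by wrapping it in markers.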

Bear with me: it doesn't mean added work. It simply means a different kind of work. And much less of it (if you do it right), with more robust results.

That's not quite the end of it, though. We don't just want a tree editor for our notes. We want that tree to freely contain tables.

We want to piece together an editor with editors inside.

Because if we are only allowed to employ a single structure for the whole document, it won't help us very much yet.

We want to support embedding.

- A tree of notes will have sections as its nodes.

- A section is an object with a heading.

- A heading is an object with tags.

- A section body includes a list of other objects.

- Those other objects may be tables, paragraphs, pictures, code blocks, really: anything you want.

And a table will then just be a 2d array in memory and its (tabular) cells may contain various other types of objects with their own unique structure.
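Here is a Python sketch of that nesting, assuming a single shared method, `children()`, serves as the "common interface". The class names are hypothetical. Because a table is just a 2D array whose cells hold other objects, one generic traversal reaches a paragraph inside a table inside a section without knowing anything about tables.

```python
from dataclasses import dataclass, field


@dataclass
class Paragraph:
    words: list  # plain strings here; could be Word objects

    def children(self):
        return []


@dataclass
class Table:
    cells: list  # 2D array: rows of cells; each cell is a list of objects

    def children(self):
        return [obj for row in self.cells for cell in row for obj in cell]


@dataclass
class Section:
    heading: str
    tags: set = field(default_factory=set)
    body: list = field(default_factory=list)         # tables, paragraphs, ...
    subsections: list = field(default_factory=list)  # nested Sections

    def children(self):
        return self.body + self.subsections


def walk(node):
    """Depth-first traversal that relies only on the shared children() method."""
    yield node
    for child in node.children():
        yield from walk(child)
```

Finding, say, every paragraph that mentions "nails" anywhere in the notes is then a filter over `walk(doc)`, whether the paragraph sits in a section body or in a table cell.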

Every data structure should have a dedicated editor! But more than that, we should be able to freely piece these editors together and embed them into each other.
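Here is one way to picture "an editor with editors inside", sketched in Python. A registry maps each data type to a dedicated editor, and a container's editor delegates to whichever editor owns each embedded object. Only rendering is shown. `editor_for`, `render` and the editor classes are hypothetical, and a real editor would also dispatch input, cursor movement and so on.

```python
from dataclasses import dataclass, field


@dataclass
class Paragraph:
    text: str


@dataclass
class Table:
    rows: list  # 2D array of cell objects


@dataclass
class Section:
    heading: str
    body: list = field(default_factory=list)


EDITORS = {}


def editor_for(node_type):
    """Register a dedicated editor class for one data type."""
    def register(cls):
        EDITORS[node_type] = cls
        return cls
    return register


def render(node) -> list:
    # Dispatch on the node's type: each structure gets its own editor.
    return EDITORS[type(node)]().render(node)


@editor_for(Paragraph)
class ParagraphEditor:
    def render(self, p):
        return [p.text]


@editor_for(Table)
class TableEditor:
    def render(self, t):
        # Embedding: each cell is rendered by whatever editor owns its type.
        return [" | ".join(" ".join(render(cell)) for cell in row) for row in t.rows]


@editor_for(Section)
class SectionEditor:
    def render(self, s):
        lines = ["* " + s.heading]
        for child in s.body:
            lines.extend(render(child))
        return lines
```

Adding a new kind of embeddable object means registering one more editor. The section and table editors don't need to change.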

That raises a question: if we piece together a bunch of editors, can they even interoperate?